The Brief

Anthropic's Claude Mythos Leak Reveals a New Model Tier. Arm Ships Its First Chip. China Bars Manus AI Executives From Leaving the Country.

It definitely feels like we’ve crossed some agentic threshold in the past few months. A build that would have taken me four to six weeks five years ago now takes me under five minutes. Six months ago, the same task was still a one-to-two-hour affair with plenty of debugging.

That’s a pretty significant phase change that I’m not sure we’ve fully grappled with yet. This collapse of the distance between idea and working product will rewrite entire industries. It is a step change in the tools that humans will use to build, create, and solve problems.

On a related note, OpenClaw has gotten meaningfully more stable since the OpenAI acquisition. There is a clear path for it to become one of the most important open-source projects in AI for the long haul.

Now, onto the week.

The Download

What We’re Reading This Week

Anthropic’s Claude Mythos Leak Reveals a New Model Tier

Anthropic exposed details of an unreleased model called Claude Mythos through a CMS misconfiguration. The leaked draft describes a new “Capybara” tier above Opus with major advances in coding, reasoning, and cybersecurity capabilities. Anthropic confirmed it is testing the model with early access customers and called it “a step change” and “the most capable we’ve built to date.” (Fortune, The Decoder)

Why it matters: Two things matter here beyond the model itself. First, the leaked draft warns that the model’s cybersecurity capabilities are “far ahead of any other AI model,” which moved cybersecurity equities in a single session. Second, the introduction of a fourth model tier (Capybara above Opus) signals Anthropic is building pricing headroom for enterprise, not just performance headroom for benchmarks.

Claude Code Is Becoming Anthropic’s Core Growth Engine

Claude Code now accounts for roughly 4% of all public GitHub commits and is on a trajectory to reach 20%+ by year end. Anthropic’s overall revenue run rate has reached an estimated $14 billion, with Claude Code’s standalone run rate at approximately $2.5 billion. The tool has crossed over from developer adoption into non-technical users learning terminal commands to build with it. (SemiAnalysis, Uncover Alpha, VentureBeat)

Why it matters: Claude Code is compressing customer acquisition costs to near zero through organic developer adoption. The expansion into non-developer roles via Cowork extends the addressable market well beyond the 28 million professional developers globally.

Cheng Lou’s Pretext: Text Layout Without CSS

Cheng Lou, one of the more influential UI engineers of the last decade (React, ReasonML, Midjourney), released Pretext, a pure TypeScript text measurement algorithm that bypasses CSS, DOM measurements, and browser reflow entirely. The demos: virtualized rendering of hundreds of thousands of text boxes at 120fps, shrinkwrapped chat bubbles with zero wasted pixels, responsive multi-column magazine layouts, and variable-width ASCII art. (X post)

Why it matters: Text layout and measurement has been the quiet bottleneck holding back a new generation of UI. CSS was designed for static documents, not the fluid, AI-generated, real-time interfaces that are becoming the norm. If Pretext delivers on the demos, it removes one of the last foundational constraints on what AI-native interfaces can look and feel like.

Arm Ships Its First Chip in 35 Years

Arm unveiled the AGI CPU, a 136-core data center processor on TSMC 3nm, co-developed with Meta. This is the first time in the company’s history that Arm has sold finished silicon rather than licensing IP. OpenAI, Cerebras, and Cloudflare are launch partners, with volume shipments expected by end of year. (Arm Newsroom, EE Times)

Why it matters: Current AI data centers are GPU-heavy. The GPU trains and runs the model, and the CPU mostly manages data flow and scheduling. But agentic workloads are different. When thousands of AI agents are running simultaneously, each one coordinating tasks, calling APIs, managing memory, and routing data across systems, that orchestration work falls on the CPU. Arm claims this drives a 4x increase in CPU demand per gigawatt of data center capacity. (HPCwire, Futurum Group)

NVIDIA and Emerald AI Turn Data Centers Into Grid Assets

NVIDIA and Emerald AI announced a coalition with AES, Constellation, Invenergy, NextEra, and Vistra to build “flexible AI factories” that modulate compute load to participate in grid balancing services. The first facility, Aurora in Manassas, VA, opens in the first half of 2026. (NVIDIA Newsroom, Axios)

Why it matters: The biggest constraint on AI infrastructure buildout is not chips. It’s grid interconnection timelines, which run 3 to 5 years in most regions. Data centers that can demonstrate grid flexibility get connected faster and face less regulatory resistance. This reframes the energy question for AI infrastructure investors: the winning thesis is not “more power” but “smarter power.”

China Bars Manus AI Executives From Leaving the Country

Chinese authorities barred Manus CEO Xiao Hong and Chief Scientist Ji Yichao from leaving China after Meta’s $2 billion acquisition of the Singapore-based AI startup. The NDRC summoned both executives to Beijing this month and imposed travel restrictions pending regulatory review. (Reuters, Washington Post)

Why it matters: This is not a trade restriction. It is a personnel restriction. China might be signaling that AI talent with mainland origins is a controlled asset, regardless of where the company is incorporated.

A 400B-Parameter LLM Ran on an iPhone 17 Pro

An open-source project called Flash-MoE demonstrated a 400-billion parameter Mixture of Experts model running entirely on-device on an iPhone 17 Pro’s A19 Pro chip, using SSD-to-GPU weight streaming. The model (Qwen 3.5-397B, 2-bit quantized, 17B active parameters) ran at 0.6 tokens per second with 5.5GB of RAM to spare. (WCCFTech, TweakTown, Hacker News)

Why it matters: This is a proof of concept, not a product. The reason a 400B model can run at all on a phone with 12GB of RAM is that only a small fraction of the model is active at any given moment (Mixture of Experts), and the rest streams from the phone's internal SSD on demand rather than sitting in memory. But now apply that same trick to a much smaller model, say 7 or 14 billion parameters, on next-generation mobile chips with faster storage. You get genuinely usable, conversational-speed AI running entirely on the device, no cloud required.
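For a rough sense of the numbers, here is a back-of-envelope sketch in Python. It uses only the figures quoted above (397B total parameters, 17B active, 2-bit weights, 12GB of RAM, 0.6 tokens per second). The helper name `weight_gb` is made up for illustration, the math ignores quantization overhead such as per-block scales, and the bandwidth figure assumes the worst case where every active weight is re-read from storage for every token with no caching. None of this is taken from the Flash-MoE code itself.

```python
# Back-of-envelope memory math for a 2-bit quantized Mixture of Experts model.
# Assumptions (not from Flash-MoE): no quantization metadata overhead,
# and no caching of expert weights between tokens.

GB = 1e9  # decimal gigabytes


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (hypothetical helper)."""
    return params * bits_per_weight / 8 / GB


total_params = 397e9    # full model size quoted in the item
active_params = 17e9    # parameters used per token
bits = 2                # 2-bit quantization
ram_gb = 12             # phone RAM quoted in the item
tokens_per_second = 0.6

total_gb = weight_gb(total_params, bits)    # whole model on storage
active_gb = weight_gb(active_params, bits)  # weights touched per token

# Worst case: stream every active weight from storage for every token.
worst_case_read_gb_per_s = active_gb * tokens_per_second

print(f"Full model weights:  {total_gb:.2f} GB (RAM available: {ram_gb} GB)")
print(f"Active per token:    {active_gb:.2f} GB")
print(f"Worst-case read rate: {worst_case_read_gb_per_s:.2f} GB/s")
```

Under these assumptions the full model is roughly eight times larger than the phone's RAM, while the slice needed for any single token fits comfortably, which is why streaming from storage makes the demo possible at all.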

AI Agents Autonomously Performed a Complete Particle Physics Experiment

MIT researchers published a framework called JFC (Just Furnish Context) demonstrating that LLM agents built on Claude Code can autonomously execute a full high energy physics analysis pipeline: event selection, background estimation, uncertainty quantification, statistical inference, and paper drafting. The system ran on open data from ALEPH, DELPHI, and CMS detectors. (arXiv 2603.20179)

Why it matters: This is one of the clearest demonstrations that agentic AI can automate end-to-end scientific workflows in a domain with extremely high methodological rigor. The immediate investment implication is for the reanalysis of legacy datasets across physics, genomics, and materials science, where decades of archived data sit underexploited.

Deep Dive From The Review

Humanoid robots are the most demanding battery-powered machines ever built.

400 power spikes per charge. 80°C inside the torso. Discharge rates three to five times higher than an EV.

No battery was designed for this workload. Can current-day battery chemistry keep up with humanoid ambition?

New piece by Strange Research Fellows Joy Yang and Mason Rodriguez Rand.

Please help me improve The Strange Review! I’d love your thoughts.

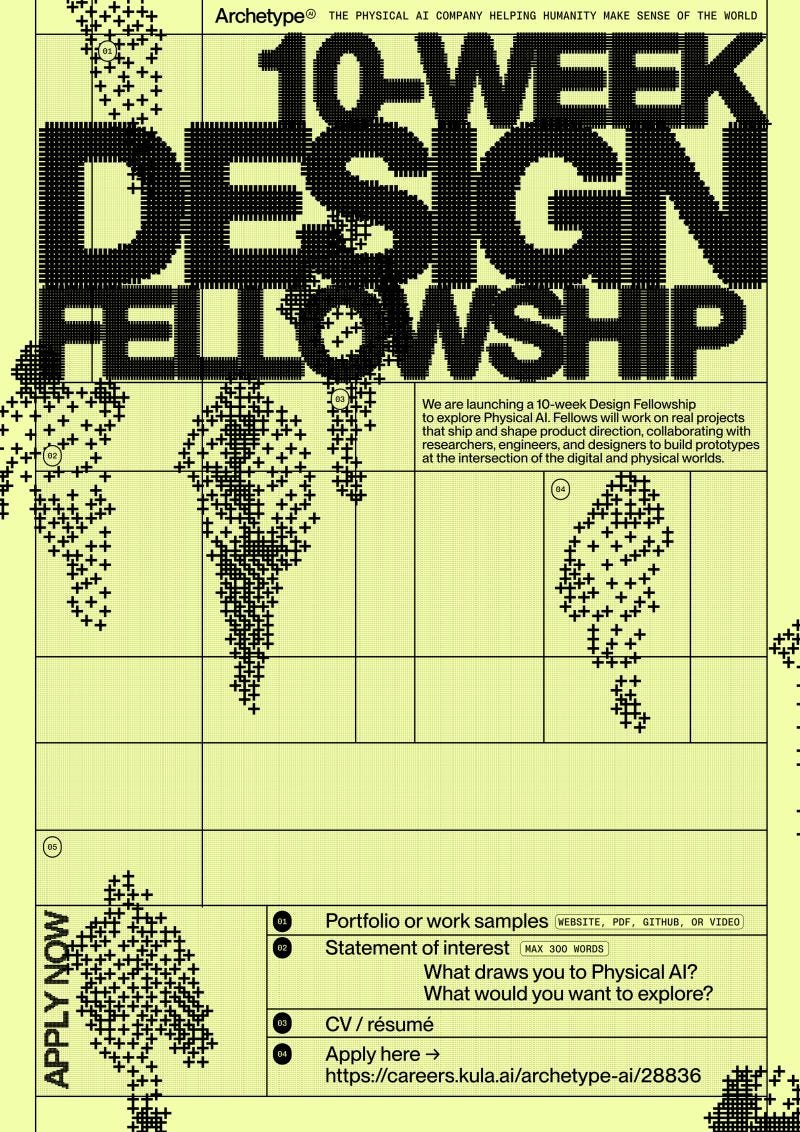

Physical AI company Archetype is hiring Design Fellows this summer to explore the future of AI interfaces beyond the screen. Apply here.