The Whale in the Room

Why the Vercel breach was an expected outcome of the current architecture, and where we could go next.

On Sunday, Vercel, a popular hosting platform that serves 2.4 million websites, including those of OpenAI, Reddit, Discord, Anthropic, and Stripe, disclosed a major security breach.

The sequence of events:

1. In February 2026, a Context.ai employee was infected with Lumma Stealer malware, which harvested their Google Workspace credentials.

2. Attackers used those credentials to compromise Context.ai’s Google Workspace OAuth application.

3. A Vercel employee had signed up for Context.ai’s “AI Office Suite” with their corporate Google account and granted it “Allow All” permissions. Using the compromised OAuth app, the attacker took over that Vercel employee’s Google Workspace account.

4. From the hijacked Workspace account, the attacker pivoted into Vercel’s internal systems and accessed environment variables that were not marked as “sensitive” and that contained API keys, tokens, and database credentials.

5. A threat actor posted stolen data, including source code, API tokens, and ~580 employee records, on a hacking forum and demanded $2 million.

The Vercel breach of April 2026 wasn’t a sophisticated zero-day. The hackers swam through a gate that was left open.

The narrative around AI agents has been about reasoning, planning, and orchestration frameworks. But one of the biggest adoption risks in 2026 is none of those things. It is the OAuth token: the bearer credential that connects an AI agent to an enterprise’s email, files, databases, calendar, and code repositories.

Every agent that does real work in an enterprise environment does so through OAuth grants. And the infrastructure for managing those grants was designed twenty years ago for a world where the only thing requesting access was a human sitting in front of a browser.

To understand why this is the hardest problem, and why it’s a multi-billion-dollar gap hiding in plain sight, we need to talk about SeaWorld.

The Killer Whale Heuristic

Imagine a marine park. The trainers are employees. The whales are AI agents. They are brilliant, autonomous, and capable of navigating complex environments to perform valuable work.

The problem isn’t the whale. The problem is the key ring.

When a trainer decides a whale needs access to a specific tank, they don’t cut a narrow, single-use key. They hand the whale a Master Key Ring and say, “Go swim freely.” The whale slips the keys over its fin and disappears into the lagoon.

Now, here’s the failure point: Park Security has no clipboard. They have perfect logs of which human trainers entered the locker room. But they have zero visibility into which whales are holding keys to the filtration system, the payroll office, or the baby dolphin nursery.

The Vercel compromise was identified through activity in a governed system, the employee’s Google Workspace account. The token itself was invisible to Vercel’s security team until after the breach occurred. Why? They were tracking the humans, not the whales.

Google’s OAuth consent model, until very recently, was all-or-nothing for most Workspace APIs: the user either approved every scope an app requested or granted nothing at all. Google began rolling out granular consent screens in early 2025. The rollout is still incomplete, and for years before it began, the architecture made over-privilege the default outcome of a correctly functioning system. The Vercel employee’s “Allow All” grant was not a mistake. It was the only option available. A second problem, token lifecycle, is what made the compromise possible in the first place: the token remained active long after the employee’s routine use of Context.ai had ended.

But the deeper failure did not hinge on the stale token alone. It was that a malware infection at Context.ai, a third party two hops removed from Vercel, could propagate outward through the OAuth chain and into Vercel’s core systems.

Every link in the chain trusted the one before it. This can happen to anyone.

The OAuth chain is not the only load-bearing protocol with structural problems. Five days before the Vercel breach, OX Security published research on Anthropic’s Model Context Protocol (MCP), the emerging standard for connecting AI agents to external tools, describing an architectural flaw that allows unsanitized commands to execute silently, enabling full system compromise.

OX identified up to 200,000 vulnerable instances across 150 million downloads. In white-hat testing, it successfully executed commands on six production platforms and poisoned 9 of 11 MCP registries with test malware.

Anthropic’s response? They declined to patch the protocol, calling the behavior “by design.”

Why The Infrastructure Broke

The natural response to the Vercel breach is to treat it as a security failure: someone should have rotated that token, someone should have scoped those permissions, someone should have reviewed Context.ai’s OAuth grants.

But the breach is really a story about a protocol being used for something it was never designed to do.

OAuth was built in 2006 for a specific interaction: a human user, sitting at a browser, granting a web application limited access to their data on another service. The user is present. The application is pre-registered with a fixed set of capabilities. The token is issued for a defined scope. The entire authorization model assumes that the entity requesting access is a known application acting at a human’s explicit direction, and that a human will be present to make the access decision.

None of this describes an AI agent.

Agents run headlessly, on servers and in containers, with no browser to display a consent screen and no user present at execution time. Developers work around this by pre-authorizing tokens or hardcoding credentials. GitGuardian’s 2026 report found 28.65 million hardcoded secrets pushed to public GitHub in 2025, a 34% year-over-year increase, with AI-assisted code leaking secrets at double the rate.

Agents are not static applications. A traditional OAuth client has a fixed set of capabilities registered in advance. However, an AI agent behaves very differently. It discovers resources at runtime, chains tool calls across services mid-task, and may invoke tools that didn’t exist when the token was issued. So, a single agent task might require OAuth for Google Drive, an API key for a data warehouse, a different API key for an LLM, and another OAuth scope for email. Each hop crosses a trust boundary with a different credential type, a different lifetime, and a different audit trail.

And the token itself is the wrong primitive for the job. An OAuth token is a bearer credential: anyone who possesses it can use it, with no cryptographic binding to the entity presenting it. A stolen token works exactly as well as a legitimate one. Permissions are fixed at issuance and cannot be narrowed at runtime. When the Context.ai database was compromised, the attacker didn’t need to impersonate anyone. They just used the token. It worked because it was designed to work for whoever holds it.
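To make the bearer-credential point concrete, here is a toy Python sketch contrasting a bearer check with a sender-constrained one. Everything in it is hypothetical: the in-memory token stores and function names are invented for illustration, and it uses a shared-secret HMAC for brevity, whereas real sender-constrained schemes such as certificate-bound tokens (RFC 8705) or DPoP (RFC 9449) bind tokens to asymmetric keys and add replay protection.

```python
import hashlib
import hmac
import secrets

# Hypothetical in-memory stores, for illustration only.
BEARER_TOKENS = {}  # token -> scope
BOUND_TOKENS = {}   # token -> (scope, key bound at issuance)


def issue_bearer(scope):
    token = secrets.token_urlsafe(16)
    BEARER_TOKENS[token] = scope
    return token


def check_bearer(token):
    # Possession of the string is the only check: whoever presents it gets the scope.
    return BEARER_TOKENS.get(token)


def issue_bound(scope, client_key):
    token = secrets.token_urlsafe(16)
    BOUND_TOKENS[token] = (scope, client_key)
    return token


def sign(client_key, token, request):
    # Proof computed by the presenter over the token and this specific request.
    message = f"{token}|{request}".encode()
    return hmac.new(client_key, message, hashlib.sha256).hexdigest()


def check_bound(token, request, proof):
    # The presenter must also prove it holds the key the token was bound to.
    entry = BOUND_TOKENS.get(token)
    if entry is None:
        return None
    scope, key = entry
    if not hmac.compare_digest(sign(key, token, request), proof):
        return None
    return scope


agent_key = secrets.token_bytes(32)
stolen = issue_bearer("drive.readonly")
print(check_bearer(stolen))  # prints "drive.readonly": a copied string works for anyone

bound = issue_bound("drive.readonly", agent_key)
thief_key = secrets.token_bytes(32)
print(check_bound(bound, "GET /files", sign(agent_key, bound, "GET /files")))  # drive.readonly
print(check_bound(bound, "GET /files", sign(thief_key, bound, "GET /files")))  # None
```

Binding only helps if the key lives somewhere the token does not; a database that stores both hands an attacker both.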

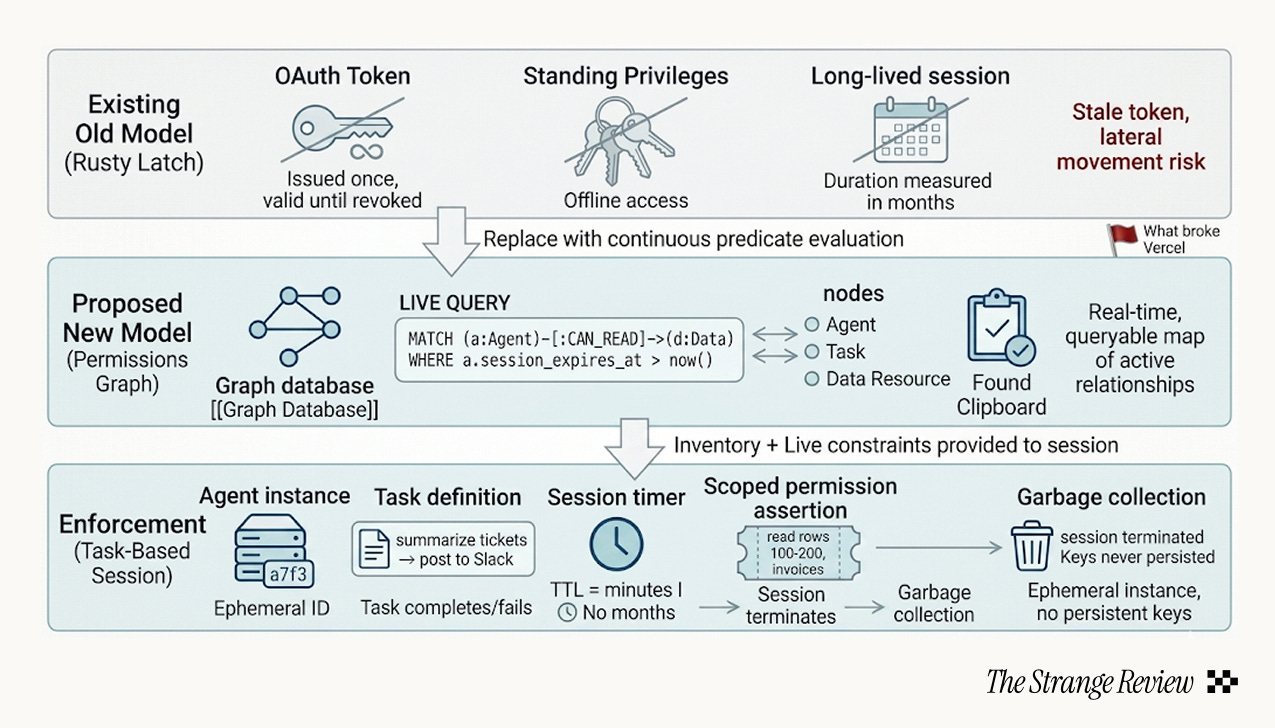

The OAuth tokens sitting in databases at thousands of companies right now were all issued under the old model: static, over-scoped bearer credentials with no agent identity, no delegation chain, no runtime evaluation, and no enforced expiration.

The Vercel breach is not an edge case. It is an expected outcome of the current architecture.

What to Fix

Most enterprises cannot tell you how many AI agents are connected to their systems, what permissions they hold, or which OAuth apps they’ve authorized. A 2026 survey found that only 24.4% of organizations have full visibility into their agent landscape. Today, the average enterprise runs 37 deployed agents, and 74% plan to deploy more within two years. The gap between whale acquisition and whale tracking is a canyon.

I would argue that the fix is not better token management. The fix is eliminating the standing token entirely.

From Rusty Latch to Revolving Door: A New Permission Model?

Three things need to change.

First, agents should not hold persistent credentials. Sessions should be scoped to individual tasks and expire when the work is done.

Second, enterprises need a live map of every agent, every token, every permission grant, queryable in real time, not a quarterly audit.

Third, the identity model needs to distinguish between the human who delegated a task and the agent that executed it.
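One existing building block for the third point is the actor (`act`) claim from OAuth 2.0 Token Exchange (RFC 8693), which lets a token name both the subject it is issued for and the party actually acting, with nested `act` claims recording earlier actors. The sketch below assumes a decoded token payload as a plain Python dict; the identities and scope strings are hypothetical, and signature validation is out of scope.

```python
# Hypothetical decoded token payload. Per RFC 8693, "sub" is the subject
# (here, the human who delegated the task), the outermost "act" is the
# current actor, and nested "act" claims are prior actors.
claims = {
    "sub": "alice@example.com",
    "scope": "tickets.read slack.post",
    "act": {
        "sub": "agent:summarizer-7f3",
        "act": {"sub": "agent:orchestrator-01"},
    },
}


def delegation_chain(payload):
    """Return [subject, earliest actor, ..., current actor]."""
    actors = []
    act = payload.get("act")
    while act is not None:
        actors.append(act["sub"])
        act = act.get("act")
    # Actors were collected newest first; reverse them to read in delegation order.
    return [payload["sub"]] + actors[::-1]


def current_actor(payload):
    # The party actually executing: the outermost actor if present, else the subject.
    act = payload.get("act")
    return act["sub"] if act else payload["sub"]


print(delegation_chain(claims))
# ['alice@example.com', 'agent:orchestrator-01', 'agent:summarizer-7f3']
print(current_actor(claims))
# agent:summarizer-7f3
```

An audit record that stores the whole chain, rather than only `sub`, is what lets security tell the whale’s actions apart from the trainer’s.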

Today, an OAuth token is standing state: issued once, valid until revoked. In a new permissions model, permission could be ephemeral. The agent doesn’t carry a master key ring or receive a long-lived identity. Instead, when a task is initiated (“summarize the last three support tickets and post to Slack”), the system spawns an ephemeral agent session. That session is granted a narrow set of permissions scoped to exactly the resources the task requires, with a time-to-live measured in minutes, not months.

The system re-evaluates those permissions every time the agent acts. When the task completes, the session terminates. The keys are not returned; they never existed as persistent objects.
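A minimal Python sketch of the ephemeral, task-scoped session described above. The class, the scope strings, and the five-minute default TTL are hypothetical choices for illustration, not an existing product or API; a real system would also need an identity provider to mint and sign sessions. What it shows is that authorization is re-checked on every action (closure, expiry, and scope), so nothing long-lived is left behind when the task ends.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TaskSession:
    """Hypothetical ephemeral session: one task, a narrow scope set, a short TTL."""
    task: str
    delegated_by: str
    scopes: frozenset
    ttl_seconds: float = 300.0  # minutes, not months
    created_at: float = field(default_factory=time.monotonic)
    closed: bool = False

    def authorize(self, action, now=None):
        # Evaluated on every call rather than once at issuance.
        now = time.monotonic() if now is None else now
        if self.closed:
            return False  # task finished: the session no longer grants anything
        if now - self.created_at > self.ttl_seconds:
            return False  # expired, even if the task is still running
        return action in self.scopes

    def close(self):
        self.closed = True


session = TaskSession(
    task="summarize the last three support tickets and post to Slack",
    delegated_by="alice@example.com",
    scopes=frozenset({"tickets:read", "slack:post"}),
)
print(session.authorize("tickets:read"))  # True
print(session.authorize("drive:read"))    # False: outside the task's scope
session.close()
print(session.authorize("slack:post"))    # False: the task is done
```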

The whale does not keep the key ring. The whale is handed a single, time-locked key at the tank entrance, and the key dissolves when the whale swims out.

This eliminates the two failures that made the Vercel breach possible.

Stale tokens become impossible because there is no token to go stale; the agent lives exactly as long as the work.

Lateral movement is capped: an attacker who compromises a session gets a sandboxed view of one task, not a master key to the entire Workspace.

Next, the enforcement layer needs a discovery layer underneath it: a real-time permissions graph that maps every active relationship between agents, tasks, and data.

This is not an audit log (which tells you what happened) but a live inventory of what can happen right now: which sessions are active, which agent instance each is attached to, what scopes each holds, and who granted them. When an employee leaves or stops using a tool, the graph shows every agent holding permissions that trace back to that person’s grants.
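The “trace back to that person’s grants” query is essentially a graph traversal. Below is a minimal in-memory Python sketch, assuming hypothetical `Grant` records in which a human or an agent delegates scopes to another agent; the scope names are illustrative, and a production inventory would be fed by identity-provider and SaaS admin APIs rather than built by hand.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    grantor: str   # human or agent that delegated access
    grantee: str   # agent instance that received it
    scopes: tuple
    active: bool = True


class PermissionsGraph:
    """Hypothetical live inventory: who can do what, right now."""

    def __init__(self):
        self._issued = defaultdict(list)  # grantor -> grants it issued

    def add(self, grant):
        self._issued[grant.grantor].append(grant)

    def traced_to(self, person):
        """Every active grant whose delegation path starts at `person` (breadth-first)."""
        found, seen, queue = [], {person}, deque([person])
        while queue:
            holder = queue.popleft()
            for grant in self._issued.get(holder, []):
                if not grant.active:
                    continue  # revoked or expired grants no longer confer access
                found.append(grant)
                if grant.grantee not in seen:  # guard against delegation cycles
                    seen.add(grant.grantee)
                    queue.append(grant.grantee)
        return found


graph = PermissionsGraph()
graph.add(Grant("alice@example.com", "agent:office-suite", ("drive.readonly", "gmail.send")))
graph.add(Grant("agent:office-suite", "agent:summarizer", ("drive.readonly",)))
graph.add(Grant("bob@example.com", "agent:ci-bot", ("repo.read",)))
graph.add(Grant("alice@example.com", "agent:old-plugin", ("calendar",), active=False))

# Alice leaves: which agents still hold access that traces back to her?
for g in graph.traced_to("alice@example.com"):
    print(g.grantee, g.scopes)
```

Because the traversal follows agent-to-agent delegation, it surfaces the second-hop agent that a flat list of Alice’s own OAuth grants would miss.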

The Vercel breach was inevitable under the old model. Under a task‑ and session‑based model, the attack surface shrinks dramatically: the token would have expired long before the attacker could use it, and even a compromised live session would have exposed a single task, not the entire Workspace.

This Is Not a Case Against Whales

The argument here is not that AI agents are too dangerous to deploy. The argument is that we have no system for tracking the keys we’ve handed them. You don’t solve the Killer Whale Problem by locking the whale in a tiny tank. You solve it by building a better clipboard.

I hope that the Vercel breach is a watershed incident that forces regulators (and cyber insurers) to start asking a question they haven’t been asking.

By the end of 2026, the standard cybersecurity questionnaire should contain a specific, painful line item: “Provide a complete inventory of all OAuth 2.0 grants with offline_access scopes issued to third-party AI/LLM applications in the last 12 months.”
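As a rough illustration of what answering that line item involves, here is a Python filter over an exported grant inventory. The `GrantRecord` fields, including the `is_ai_app` flag, are hypothetical: no standard export looks like this, and classifying an app as AI/LLM would itself require vendor review. Note also that `offline_access` is the OpenID Connect scope for requesting refresh tokens; some providers, Google among them, signal offline access through an authorization-request parameter instead, so a real inventory would need to normalize that.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class GrantRecord:
    app_name: str
    third_party: bool
    is_ai_app: bool      # hypothetical flag, e.g. set during vendor review
    scopes: frozenset
    issued_at: datetime  # timezone-aware


def questionnaire_inventory(records, now=None):
    """Third-party AI/LLM grants with offline_access issued in the last 12 months."""
    now = datetime.now(timezone.utc) if now is None else now
    cutoff = now - timedelta(days=365)  # "12 months" approximated as 365 days
    return [
        r for r in records
        if r.third_party
        and r.is_ai_app
        and "offline_access" in r.scopes
        and r.issued_at >= cutoff
    ]


records = [
    GrantRecord("ai-office-suite", True, True, frozenset({"openid", "offline_access"}),
                datetime(2026, 3, 1, tzinfo=timezone.utc)),
    GrantRecord("crm-sync", True, False, frozenset({"offline_access"}),
                datetime(2026, 2, 1, tzinfo=timezone.utc)),
]
for r in questionnaire_inventory(records, now=datetime(2026, 6, 1, tzinfo=timezone.utc)):
    print(r.app_name)
```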

Companies that cannot answer that question will find themselves in difficult conversations with their customers.

The Vercel breach already happened. The MCP vulnerability is already public. The whales are in the water, they have the keys, and nobody is holding the clipboard.

Joy Yang is a Strange Research Fellow. She is pursuing computer science and government at Oxford, and is a researcher with its Visual Geometry Group. She was previously an intern with OpenAI and Google.
