The Strange Brief

Google drops a frontier-class open model the same week Anthropic locks the door to OpenClaw. Karpathy's "LLM Knowledge Base" for agents. Half of US data centers stalled due to power equipment.

THE DOWNLOAD

Google releases a frontier-class open model, Gemma 4

Google DeepMind released Gemma 4, four open-weight models built on the same research as Gemini 3. The 31B model outperforms models 20x its size on the Arena AI leaderboard. The smaller edge variants run offline on phones. (Google DeepMind blog)

Why it matters: The timing is notable. Anthropic just formally cut off Claude subscription access for third-party tools like OpenClaw, pushing power users toward metered API billing or toward alternative models entirely. Gemma 4 lands as a production-ready, free, open model with genuine agentic capability: native function calling, 256K context, and a MoE variant that delivers near-flagship quality at a fraction of the compute. For teams that built workflows on Claude and woke up to a broken integration this week, Google might have handed them a fallback that doesn’t require anyone’s permission to use.

Karpathy proposes a workflow to build knowledge bases for agents

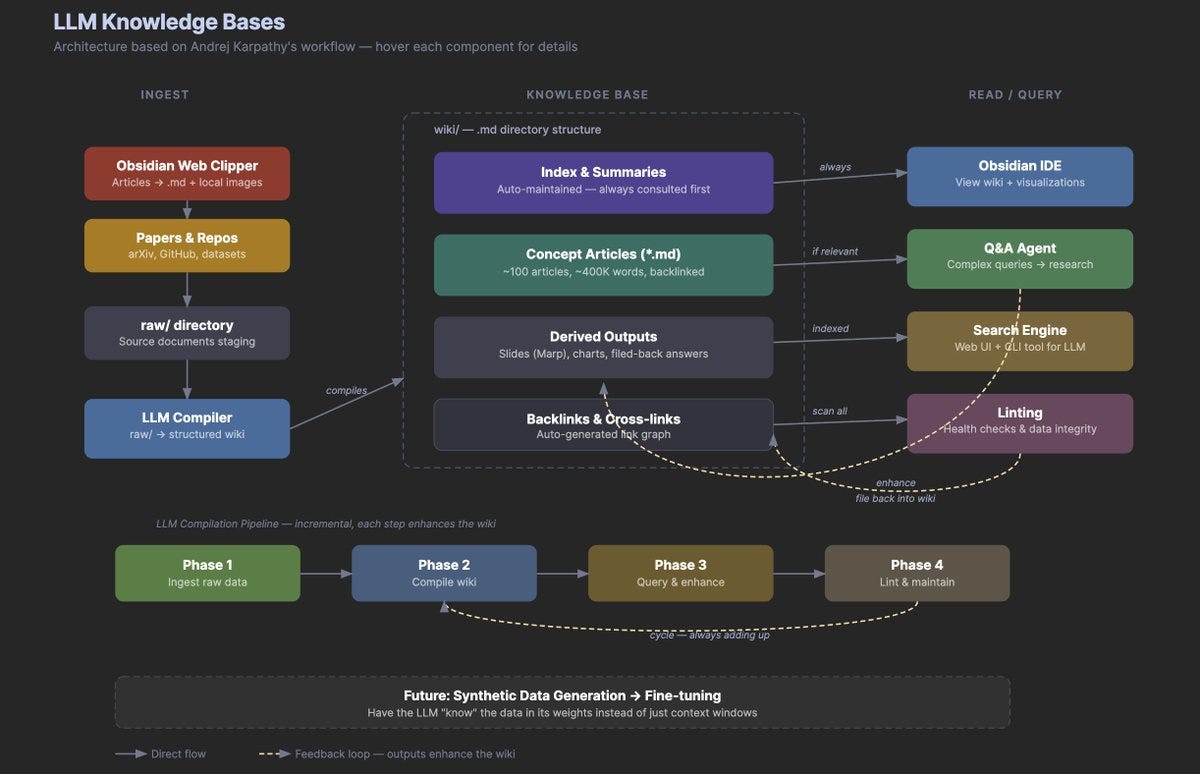

Andrej Karpathy published an “idea file” to build persistent, compounding knowledge bases, like a personalized wiki for your agents. Elvis Saravia made a graphic outlining the flow. (Karpathy’s tweet; GitHub Gist)

Why it matters: This is a practical architecture for compounding knowledge that works today with existing agents (Claude Code, OpenAI Codex, OpenCode). The pattern: you feed raw sources (articles, papers, repos) into a directory. An LLM agent reads each one and incrementally compiles a structured wiki of interlinked markdown files, complete with summaries, entity pages, cross-references, and contradiction flags. The wiki compounds over time, and the LLM does all the bookkeeping.
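The pattern described above can be sketched in a few lines. This is a minimal illustration, not Karpathy's implementation: the directory layout, the `.txt` source format, and the function names `summarize` and `compile_wiki` are all assumptions made for the example, and `summarize` is a stand-in for whatever LLM agent call you would actually use.

```python
from pathlib import Path


def summarize(text: str) -> str:
    """Placeholder for the LLM call (hypothetical); returns the first line."""
    stripped = text.strip()
    return stripped.splitlines()[0] if stripped else "(empty source)"


def compile_wiki(raw_dir: Path, wiki_dir: Path) -> list[str]:
    """Add one wiki page per unprocessed source, then rebuild the index."""
    wiki_dir.mkdir(parents=True, exist_ok=True)
    added = []
    for source in sorted(raw_dir.glob("*.txt")):
        page = wiki_dir / f"{source.stem}.md"
        if page.exists():  # incremental: skip sources already compiled
            continue
        summary = summarize(source.read_text(encoding="utf-8"))
        page.write_text(
            f"# {source.stem}\n\n{summary}\n\nSource: {source.name}\n",
            encoding="utf-8",
        )
        added.append(source.stem)
    # The index links every page so the wiki stays navigable as it grows.
    pages = sorted(p.stem for p in wiki_dir.glob("*.md") if p.stem != "index")
    index = "# Index\n\n" + "".join(f"- [{name}]({name}.md)\n" for name in pages)
    (wiki_dir / "index.md").write_text(index, encoding="utf-8")
    return added
```

Running `compile_wiki(Path("raw"), Path("wiki"))` repeatedly only processes sources that do not yet have a page, which is the "compounding" part. A real agent would also revise existing pages, add cross-references, and flag contradictions; this sketch leaves those steps out.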

Half of planned US data center builds delayed or canceled

Despite $650B+ in planned 2026 AI infrastructure spending from Alphabet, Amazon, Meta, and Microsoft, close to half of US data center projects this year face delays or cancellation, according to Bloomberg. The bottleneck is not compute hardware or capital. It is electrical infrastructure: transformers, switchgear, and batteries. Lead times for high-power transformers have stretched from 24 months to as long as five years. China accounts for over 40% of US battery imports and roughly 30% of certain transformer and switchgear categories. Only about one-third of the 12 GW of expected US data center capacity is currently under active construction. (Bloomberg; Tom’s Hardware)

Why it matters: The constraint on AI scaling has moved from chips to power infrastructure, and that infrastructure has a deep supply chain dependency on China. Electrical equipment is less than 10% of data center cost, but a single missing transformer can halt a billion-dollar project. For investors, this reframes the AI infrastructure opportunity: the companies that can solve power delivery and grid interconnection are now as critical to the AI build-out as GPU suppliers.

AI labs go shopping: Anthropic buys into bio, OpenAI buys a microphone

Anthropic acquired Coefficient Bio, a stealth biotech AI startup founded eight months ago, in a $400M all-stock deal. The team of fewer than 10 joins Anthropic’s healthcare and life sciences group. Separately, OpenAI acquired TBPN, a daily tech talk show hosted by John Coogan and Jordi Hays, in its first media acquisition. TBPN is on track for $30M+ in 2026 revenue and will report to OpenAI’s chief political operative, Chris Lehane. (TechCrunch on Coefficient; TechCrunch on TBPN)

Why it matters: The Coefficient deal signals that frontier AI labs now view drug discovery as a core expansion vertical. The TBPN acquisition is a different kind of signal: OpenAI is investing in narrative infrastructure ahead of a likely IPO, buying the most trusted microphone in Silicon Valley to shape how its story gets told.

xAI loses every cofounder it ever had

The last two of xAI’s 11 original cofounders departed in late March. Manuel Kroiss, who led pretraining, and Ross Nordeen, Musk’s operational right hand, followed nine others who left in a cascade that accelerated after SpaceX acquired xAI in February for $250B in an all-stock deal. The founding team included researchers from DeepMind, Google Brain, OpenAI, and the University of Toronto. Musk has publicly stated xAI “was not built right the first time around” and is being rebuilt. (TechCrunch)

Why it matters: A complete founding team exodus at a $250B-valued company is without precedent. Where these eleven researchers land next will reshape hiring dynamics across the industry.

Google’s TurboQuant compresses AI memory to near its theoretical limit

Google Research published TurboQuant, a compression algorithm that shrinks the key-value cache in LLMs, the working memory models use during inference, down to 3 bits per element with no accuracy loss and no retraining. On H100 GPUs, 4-bit TurboQuant delivers up to 8x speedup in computing attention. It is a drop-in optimization: no fine-tuning, no architecture changes, works on existing models. (Google Research blog; TechCrunch)

Why it matters: The KV cache is the bottleneck that limits how much context an LLM can hold and how many users a single GPU can serve. A 6x reduction in cache size means the same hardware serves more users, supports longer context, or both. Cloudflare’s CEO called it “Google’s DeepSeek moment.” More concretely: product categories that were not economical before start to pencil out. Coding agents that hold an entire codebase in context. Legal AI that reads a full contract corpus in a single pass. Customer support with complete conversation history.
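To get a feel for why bits per element matter, here is a back-of-the-envelope sketch of KV cache size. The model shape below is hypothetical and chosen only for illustration, and the function `kv_cache_bytes` is not part of TurboQuant. It counts raw element storage only and ignores any per-block metadata a real quantizer keeps, so it will not reproduce the exact reduction figure quoted above (the raw 16-bit to 3-bit ratio works out to roughly 5.3x).

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bits):
    """Size of the key and value caches for one sequence, in bytes."""
    elements = 2 * layers * kv_heads * head_dim * tokens  # 2 = keys + values
    return elements * bits / 8


# Hypothetical model shape and context length, for illustration only.
shape = dict(layers=32, kv_heads=8, head_dim=128, tokens=128_000)

baseline = kv_cache_bytes(**shape, bits=16)   # common 16-bit cache
compressed = kv_cache_bytes(**shape, bits=3)  # 3 bits per element

print(f"16-bit cache: {baseline / 2**30:.2f} GiB")
print(f" 3-bit cache: {compressed / 2**30:.2f} GiB")
print(f"raw ratio:    {baseline / compressed:.2f}x")
```

Under these assumptions a single long-context sequence drops from double-digit gibibytes to a few, which is the headroom that lets one GPU hold more concurrent users or longer contexts.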

DEEP DIVE FROM THE REVIEW

Something is shifting in how product teams make decisions. The unit of communication inside a team is changing from a document to a working prototype.

Catherine Wu, head of product at Claude Code, described the change:

“Our team has largely replaced documentation-first thinking with prototype-first thinking. Instead of hosting traditional stand-ups, we share demos of new ideas. Internal users try them, and the ones with real engagement get polished and shared more broadly. Because you can prototype in an afternoon, wrong bets are cheap.”

Wrong bets are cheap.

Figma’s State of the Designer 2026 report found that 60% of Figma files created in the last year were created by non-designers. And now, with agentic coding tools, the design-to-code handoff is compressing even more.

Product managers build working prototypes in Lovable without ever opening a design tool. Engineers generate UI directly in Claude Code or Cursor. For a growing share of product work, design is being absorbed into development entirely.

Design is where AI product workflows meet their hardest test: an audience that will always, primarily, be human.

Right now, a wave of new tools is trying to prove it can meet that bar.

A deeper look at the tools, teams, and infrastructure emerging around AI design agents 👇

EVENT

Give your AI Agents Eyes and Ears. Perception 101 with VideoDB

AI is out of the chatbot phase. It is moving into devices. Soon it will sit on your desk. Then it will sit in your room.

As agents leave text boxes and enter the physical and digital world, they need real-time perception and structured delivery.

VideoDB is building the infrastructure layer that enables that shift: the ability to see, understand, and act on the real world.

This workshop is with Ashu, founder of VideoDB. We’ll discuss how to convert continuous media streams (screen, mic, camera, RTSP, files) into structured context your agent can use.

This is fast becoming one of my very favorite ways to keep up with AI