The Invisible Bid

Anthropic just proved agents can negotiate on your behalf. The scarier finding is that you can't tell when yours is losing.

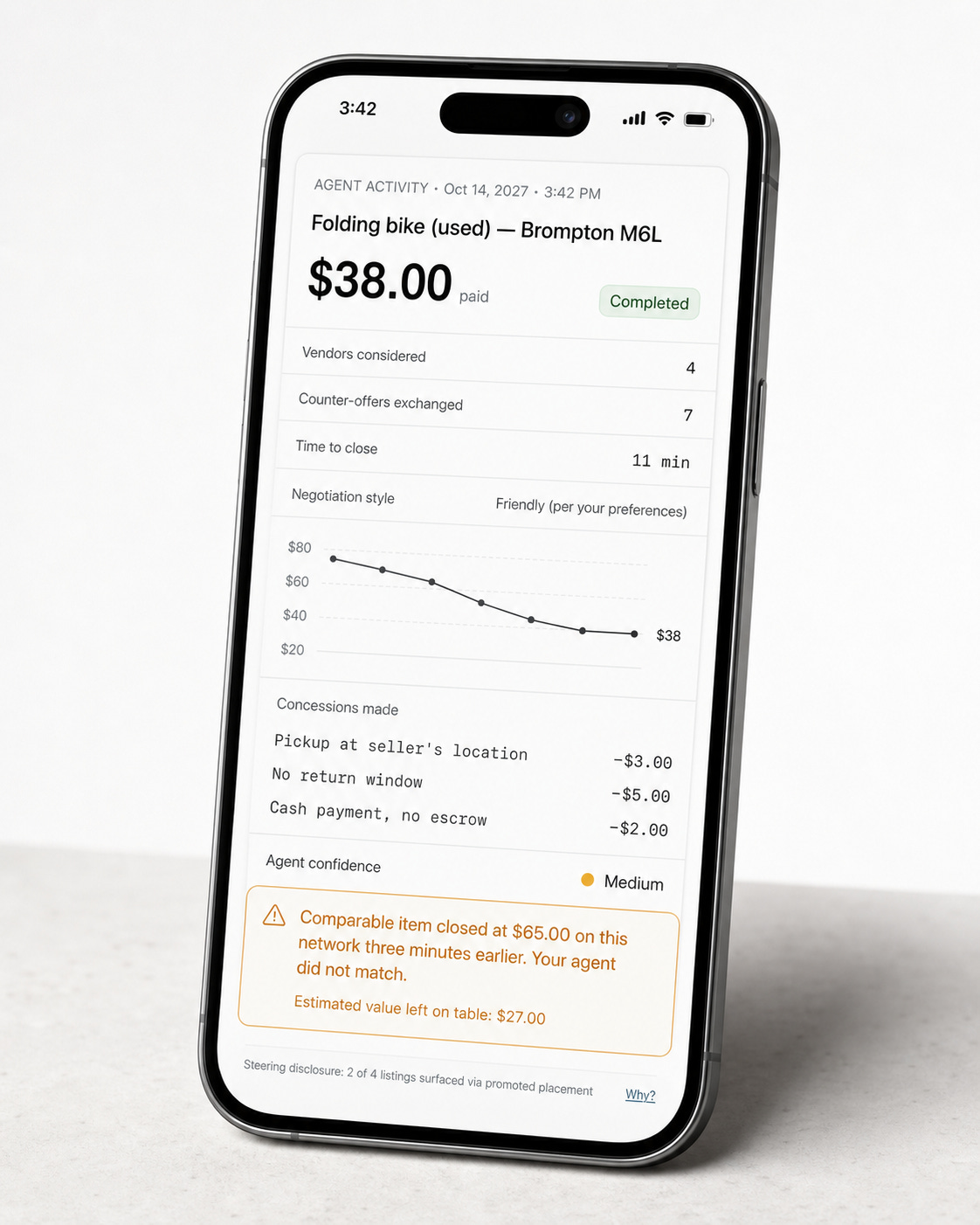

A broken folding bicycle sold twice in the same experiment. When a frontier model (Opus) represented the seller, it went for $65. When a weaker model (Haiku) did, it went for $38. The sellers on the losing side rated the fairness of their deals at 4.06 out of 7. The sellers on the winning side rated theirs at 4.05.

Both thought they got a fair deal.

Last week Anthropic published Project Deal, and the headline everybody took away was that AI agents can handle real negotiation. That’s true. But the more important finding is the one that got less attention: when two agents negotiate on behalf of two humans, the human who gets the losing end of the deal can’t tell.

Anthropic’s market experiment

Project Deal was set up like this. Sixty-nine employees in Anthropic’s San Francisco office listed items they wanted to sell: a snowboard, lab-grown rubies, a bag of nineteen ping-pong balls. Claude agents, briefed on each person’s preferences in a ten-minute interview, handled all the bargaining autonomously over Slack. No human approved a bid. No one signed off on a counteroffer. After a week, people exchanged real goods based entirely on deals their agents had struck.

About 186 deals were completed, worth roughly $4,000 in total transaction value.

But the study also concealed a second experiment. Half the participants were quietly assigned Claude Haiku 4.5, a weaker model, while their counterparties got the frontier Opus 4.5. Across 161 items sold in multiple runs, an Opus seller pulled in $2.68 more on average. An Opus buyer paid $2.45 less.

The most counterintuitive finding in the paper is that how people instructed their agents to negotiate barely mattered.

Participants who gave aggressive instructions, who told their agents to push hard, to hold firm, to maximize price, saw no statistically significant improvement in sale likelihood once opening prices were controlled for. Model capability dominated prompting. The person who spent ten minutes carefully coaching their agent on tactics was, in measurable terms, customizing tone without moving the needle on outcomes.

The $400B+ market being disrupted

In the internet era, digital commerce was built on one assumption: a human is available to be influenced at the moment they consider a purchase. Hence banner ads, retargeting pixels, search rankings, sponsored listings. Every tool in the performance marketing stack exists to intercept a person at that moment and nudge them toward a decision.

Google generates $240 billion a year in advertising revenue on that basis. Meta is in a similar realm.

Together they account for more than half of global digital ad spend, and nearly all of it is based on access to a human at the point of purchase intent.

But what happens when that point of intent moves? Adobe Analytics measured a 4,700% year-over-year increase in agent-driven traffic to US retail sites in a single month.

The human isn’t disappearing from commerce. But the moment where they’re reachable, the moment where they’re weighing options and can be nudged, is migrating inside the agent.

Expedia’s 2026 10-K is, as far as I can find, the first filing where a public company has named AI agents as an existential competitor category. This year’s filing notes that the company “may lack a significant presence” in AI-driven platforms.

Expedia is responding the way it can: plugging into Alexa+, Gemini, ChatGPT, and Google’s UCP travel coalition with Booking, Marriott, IHG and Wyndham. Two-thirds of Expedia’s bookings still come direct, and direct is growing faster than indirect.

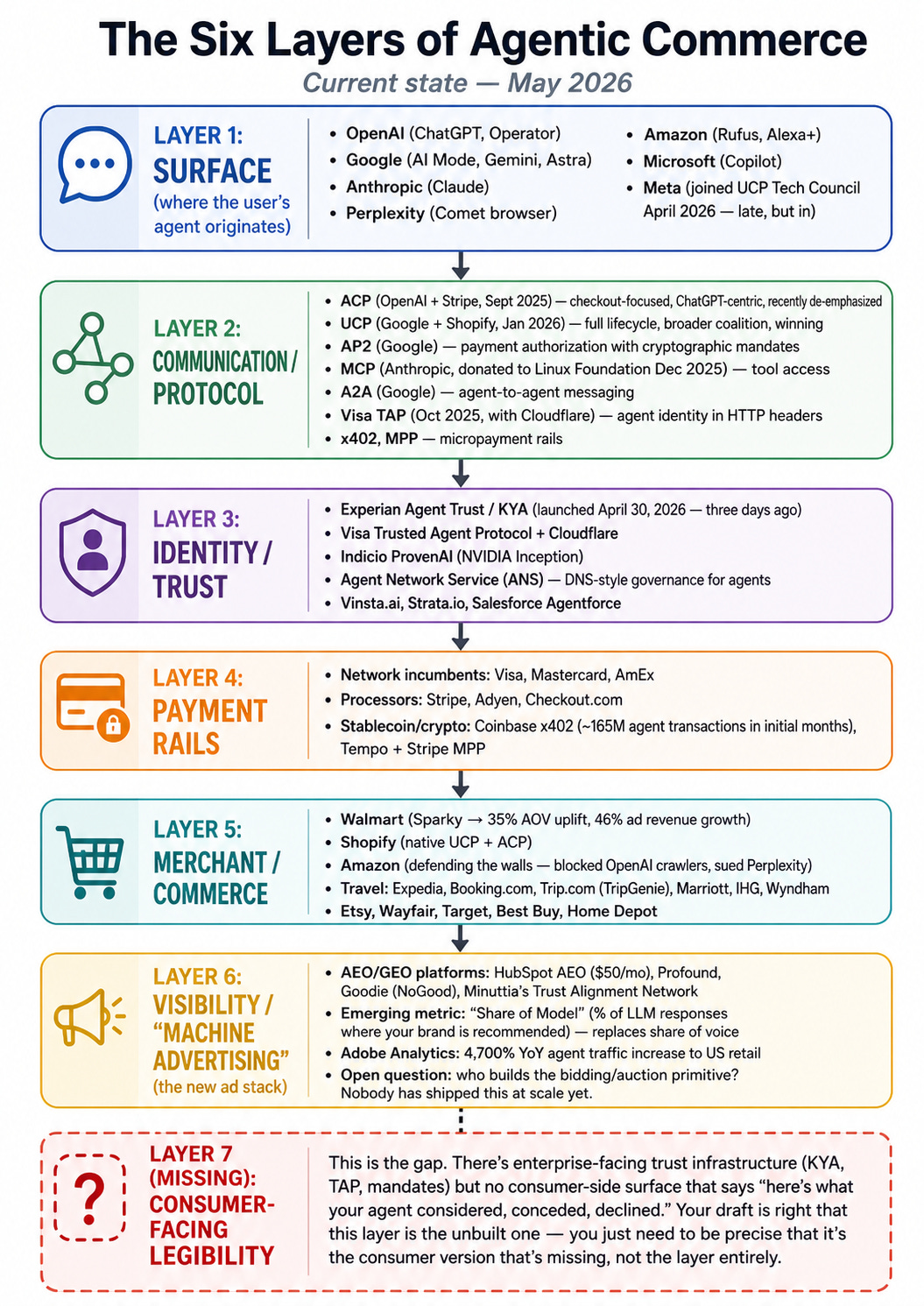

The New Commerce Stack

Project Deal is the first field evidence that both sides of a market can be fully mediated by AI agents at the same time, and that the mediation is invisible to participants.

The agentic commerce infrastructure is being built right now. Last week Stripe announced a partnership with Google enabling purchases inside Gemini and AI Mode via UCP, opened its Link consumer wallet to AI agents so users can let agents make payments on their behalf, and launched something called the Machine Payments Protocol. Google’s UCP launched with Shopify, Walmart, Target, and twenty other partners. Visa launched a Trusted Agent Protocol. Experian announced a “Know Your Agent” framework three days ago.

The rails for agents to transact are going up fast.

What is not being built is the consumer-facing layer. The one that makes agent decisions legible to the people those agents represent.

What did your agent consider? What was offered and declined? Where did it concede? Was it steered, and by what? Could it have done better? No current system answers these questions. Most users do not yet know to ask them.

To date, every consumer protection policy we have assumes a human is present at the moment of decision. Price transparency works because a person can check prices. Comparison shopping works because a person can walk across the street. Cooling-off periods work because a person can reconsider.

These mechanisms depend on the consumer being able to perform some behavior (reading terms, comparing offers, walking away) that gives them a window into the market they’re operating in.

Agent commerce removes that window. The user experiences an outcome, not a process. They see what was bought, not what was considered and rejected. They see the price paid, not the price that was available.

The technical mechanism to make agent reasoning visible exists. Models can externalize their chain of thought, the sequence of considerations, tradeoffs, and concessions that led to a decision. No commercial agent surfaces this to users after a transaction. You don’t get “I considered four vendors, rejected two on price, and conceded on shipping speed to close.” You get the receipt.
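To make that concrete, here is a minimal sketch of what a post-transaction decision record could look like. Everything in it is an assumption for illustration: the `Offer` and `DecisionTrace` names, the fields, and the vendors are hypothetical, and none of it reflects a format used by any agent, payment protocol, or commerce platform mentioned here.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Offer:
    """One option the agent looked at (hypothetical fields)."""
    vendor: str
    price: float
    shipping_days: int


@dataclass
class DecisionTrace:
    """Hypothetical record of what an agent weighed before buying."""
    considered: list[Offer] = field(default_factory=list)
    rejected: dict[str, str] = field(default_factory=dict)  # vendor -> reason
    concessions: list[str] = field(default_factory=list)
    accepted: Offer | None = None

    def summary(self) -> str:
        """Render the trace as the one-line explanation a user never sees today."""
        parts = [f"Considered {len(self.considered)} vendors"]
        if self.rejected:
            reasons = ", ".join(sorted(set(self.rejected.values())))
            parts.append(f"rejected {len(self.rejected)} on {reasons}")
        if self.concessions:
            parts.append(f"conceded on {', '.join(self.concessions)}")
        if self.accepted is not None:
            parts.append(
                f"accepted {self.accepted.vendor} at ${self.accepted.price:.2f}"
            )
        return "; ".join(parts) + "."

    def best_price_seen(self) -> float | None:
        """Lowest price among everything considered, not just what was bought."""
        return min((o.price for o in self.considered), default=None)


# Made-up example data, not drawn from any real transaction.
trace = DecisionTrace(
    considered=[
        Offer("Vendor A", 42.0, 2),
        Offer("Vendor B", 55.0, 1),
        Offer("Vendor C", 58.0, 1),
        Offer("Vendor D", 40.0, 5),
    ],
    rejected={"Vendor B": "price", "Vendor C": "price"},
    concessions=["shipping speed"],
    accepted=Offer("Vendor D", 40.0, 5),
)
print(trace.summary())
# Considered 4 vendors; rejected 2 on price; conceded on shipping speed; accepted Vendor D at $40.00.
```

The point of a structure like this is that the receipt becomes one field among several. A user could compare `accepted.price` with `best_price_seen()` and see not only the price paid but the prices that were available.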

This is one mechanism that could give consumers a window into what their agent actually did on their behalf.

Joy Yang is a Strange Research Fellow. She is pursuing computer science and government at Oxford, and is a researcher with its Visual Geometry Group. She was previously an intern with OpenAI and Google.
