The Design-Build Loop

Design is where AI product workflows meet their hardest test: an audience that will always, primarily, be human. A look at the tools, teams, and infrastructure emerging around AI design agents.

Every layer of the modern software stack has been reshaped by AI in the last eighteen months. Agents write backend logic, generate tests, deploy infrastructure, manage databases. Most of this work has an audience of machines. Servers talk to servers. APIs talk to APIs.

But the interface, the button, the card, the spacing between a label and its input field, has an audience that will always, primarily, be human. As long as humans are the user, the bar for what counts as good design is subjective, contextual, culturally dependent, and it moves every time a competitor ships something better or the cultural vibes shift.

It’s hard to write a spec for taste and delight.

For me, that makes design the most revealing test of whether AI product workflows actually work in enterprise settings. If agents can ship UI that passes the taste test, that feels intentional and consistent and considered, the implications for how product teams organize themselves are enormous.

And right now, a wave of new tools is trying to prove they can.

How PDE Teams Are Re-Organizing Themselves: The Prototype-First Team

Something is shifting in how product teams make decisions. The unit of communication inside a team is changing from a document to a working prototype. (Personally, I love and champion this change.)

Cat Wu, head of product at Claude Code, described the change:

“Our team has largely replaced documentation-first thinking with prototype-first thinking. Instead of hosting traditional stand-ups, we share demos of new ideas. Internal users try them, and the ones with real engagement get polished and shared more broadly. Because you can prototype in an afternoon, wrong bets are cheap.”

Wrong bets are cheap.

This points to a structural change in how organizations allocate attention. When prototyping costs an afternoon instead of a sprint, the entire approval apparatus that exists to prevent wasted engineering cycles (the PRDs, the design reviews, the ticket grooming, the estimation poker) becomes overhead. You don’t need a PRD to justify building something that takes four hours. You build it, you demo it, you watch what happens.

Figma’s State of the Designer 2026 report found that 60% of Figma files created in the last year were created by non-designers. And now, with agentic coding tools, the design-to-code handoff is compressing even more.

Product managers build working prototypes in Lovable without ever opening a design tool. Engineers generate UI directly in Claude Code or Cursor. For a growing share of product work, design is being absorbed into development entirely.

Three Approaches to the Design-Build Loop

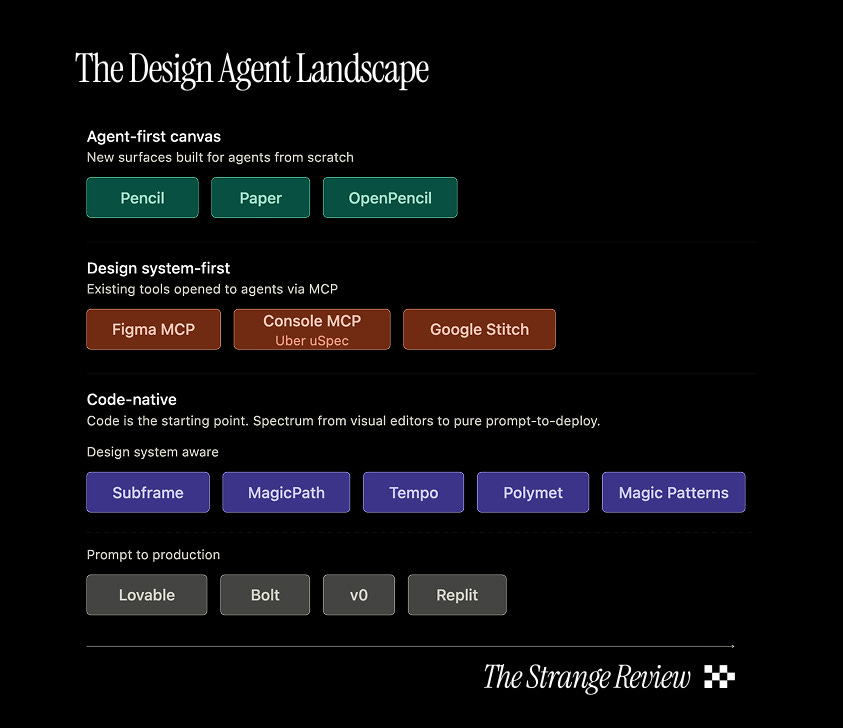

Over the past few weeks, we tested more than a dozen design agent tools across the full spectrum of what’s available in 2026, from AI-native canvases to Figma MCP plugins to prompt-to-app builders. The landscape clusters into three distinct philosophies, each starting the design-to-code loop at a different point and each building one direction well.

What we found is that most tools struggle with the return trip, for instance from engineering back to design. That matters, because every product builder knows iteration is a core part of product work.

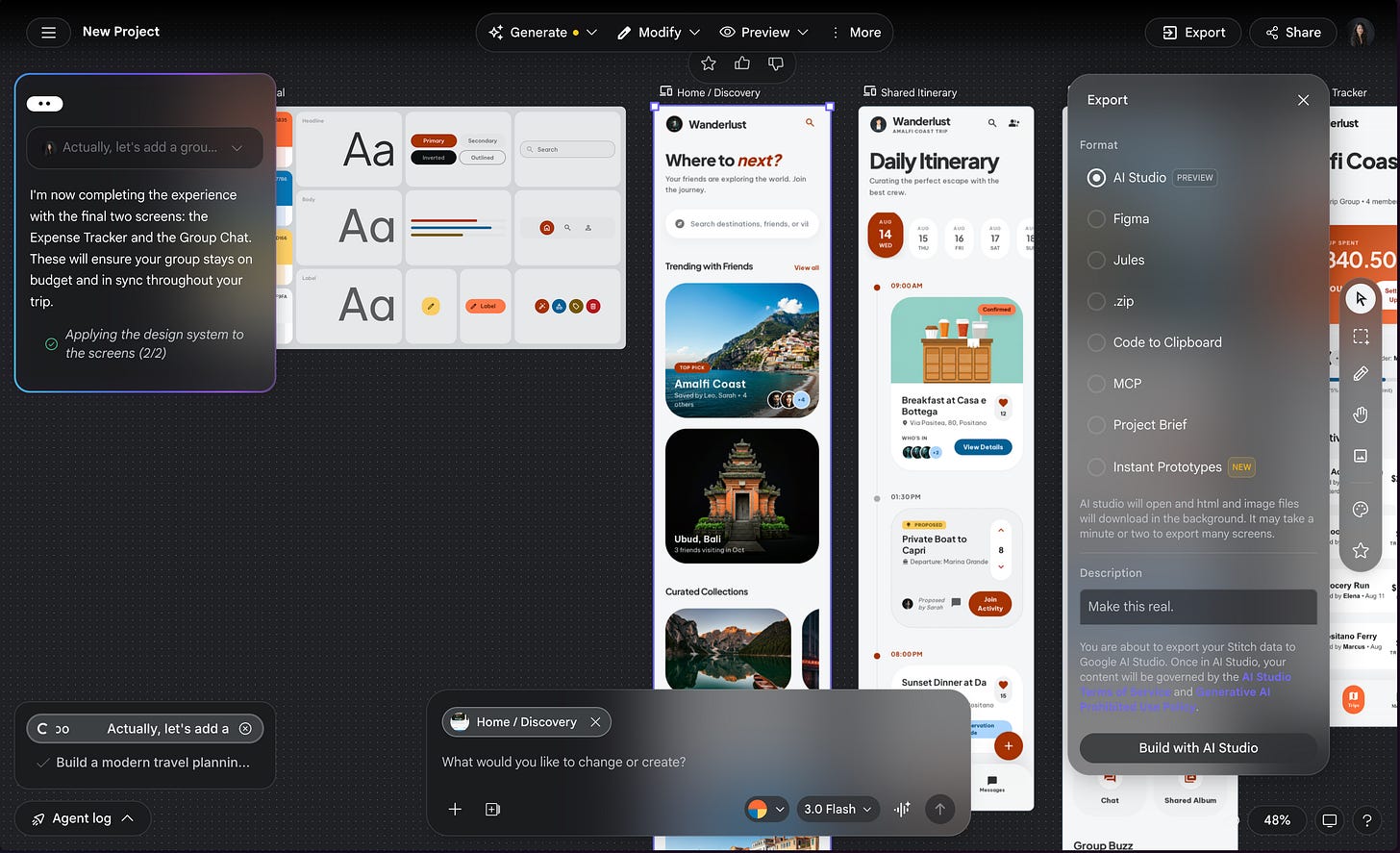

Agent-First Canvas

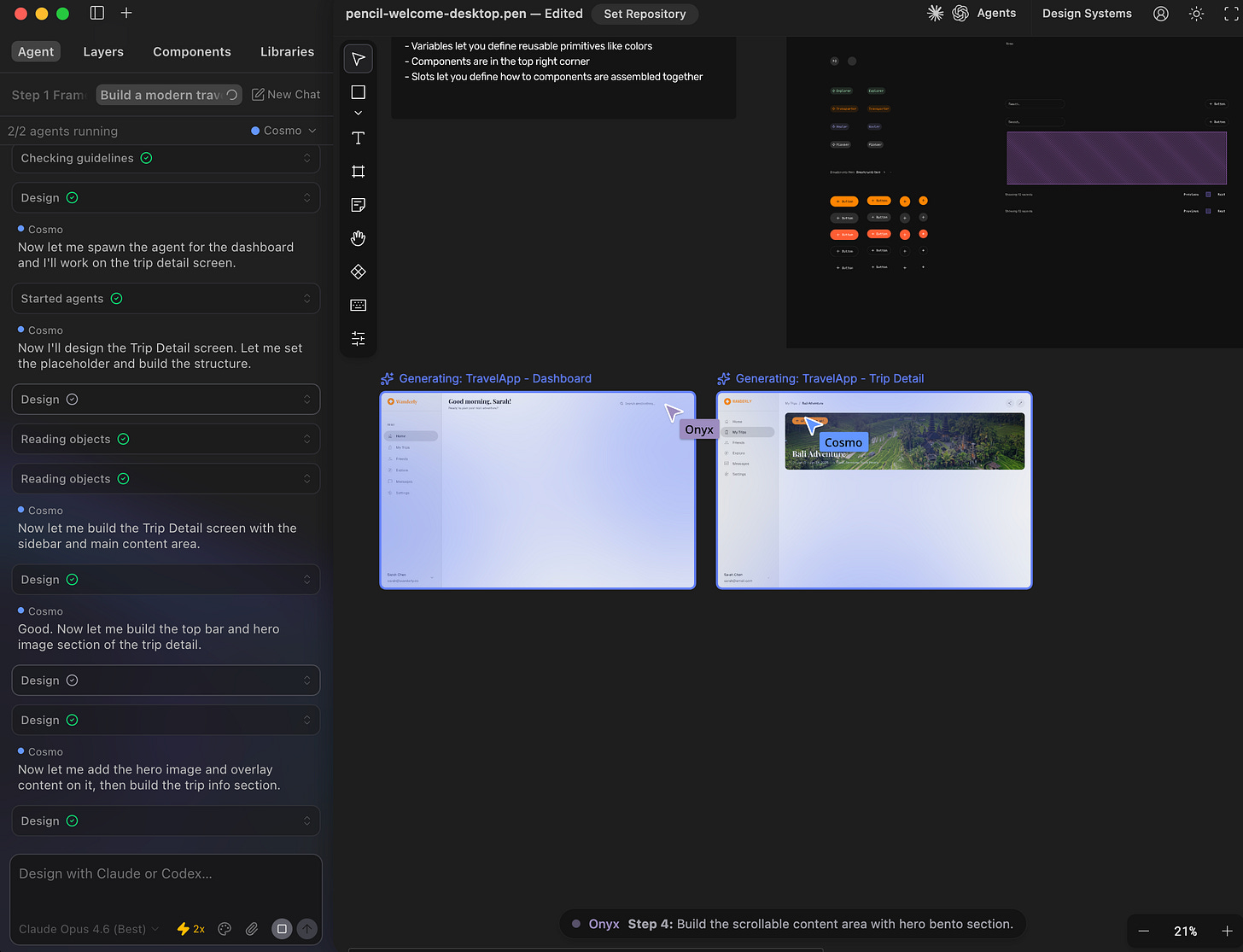

This approach rebuilds the design canvas from the ground up for agents, rather than bolting AI onto an existing tool. The canvas is the primary surface, and agents are first-class collaborators on it.

Pencil is the most visible example, with up to six AI agents working simultaneously on an infinite canvas inside your IDE, each with its own cursor. Paper takes a different angle, storing designs as actual HTML and CSS so there’s no translation layer between what you see and what the code says. OpenPencil offers an open-source alternative that reads native Figma files.

These tools sync design to code well (Pencil through Git-native .pen files, Paper through its code-native canvas), and some can import code changes back. But the sync runs through version control or file export, not as a live round-trip. You commit, you pull, you see the update. The iteration cycle has a seam in the middle.

And the trade-off is ecosystem: Figma is used by 95% of Fortune 500 companies and has a decade of design system infrastructure built on its platform. These tools have early-access users and ambition.

Design System-First

This approach keeps the existing design tool as the center of gravity and opens it to agents through protocol-level access. The design system, already built and maintained by the team, becomes the instruction set agents follow.

Figma’s MCP server, which launched full read/write canvas access on March 24, 2026, is the defining example. Agents can now create components, apply variables, and modify auto-layout using your existing design system. The key innovation is Skills: markdown files that encode your team’s conventions and teach agents how to work in Figma.

Before Figma shipped its official server, an open-source alternative (Figma Console MCP, built by Southleft) had already enabled the same kind of access with 90+ tools. This is the server Uber’s design systems team built on.

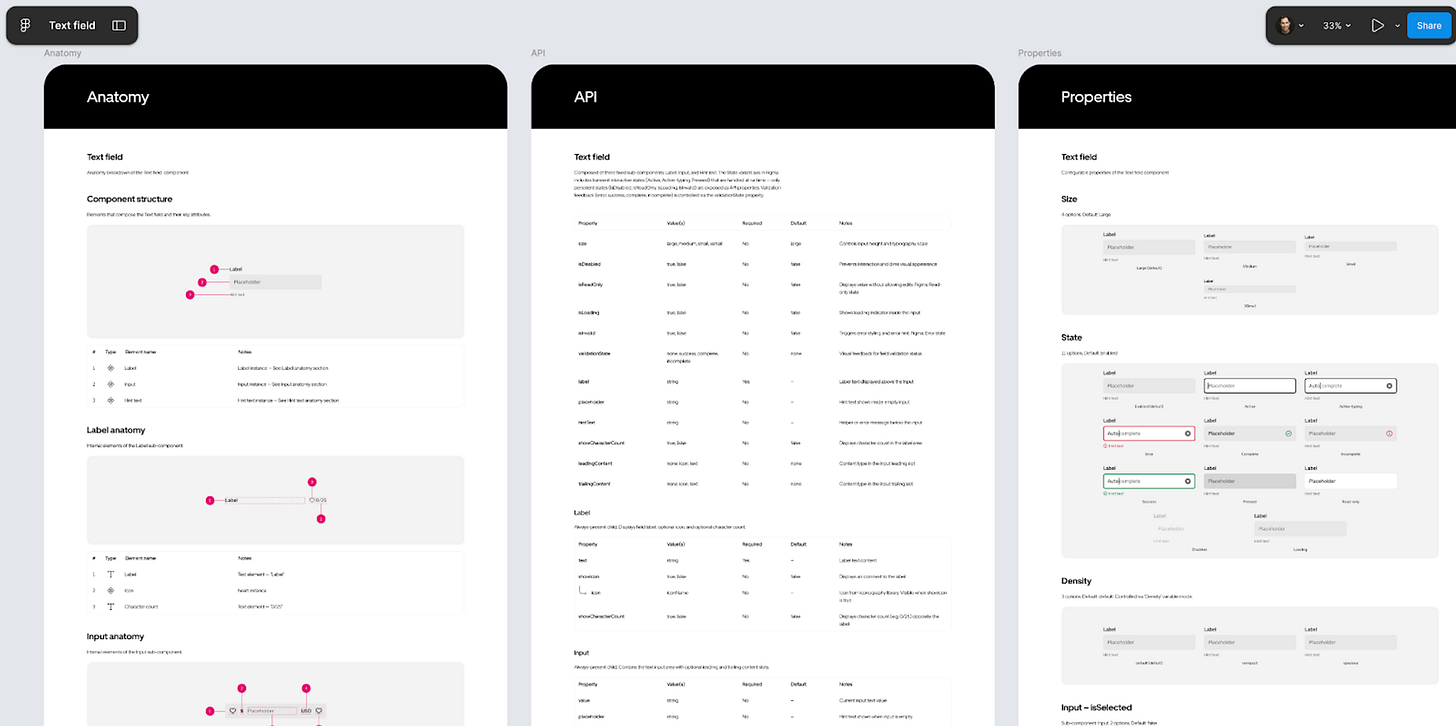

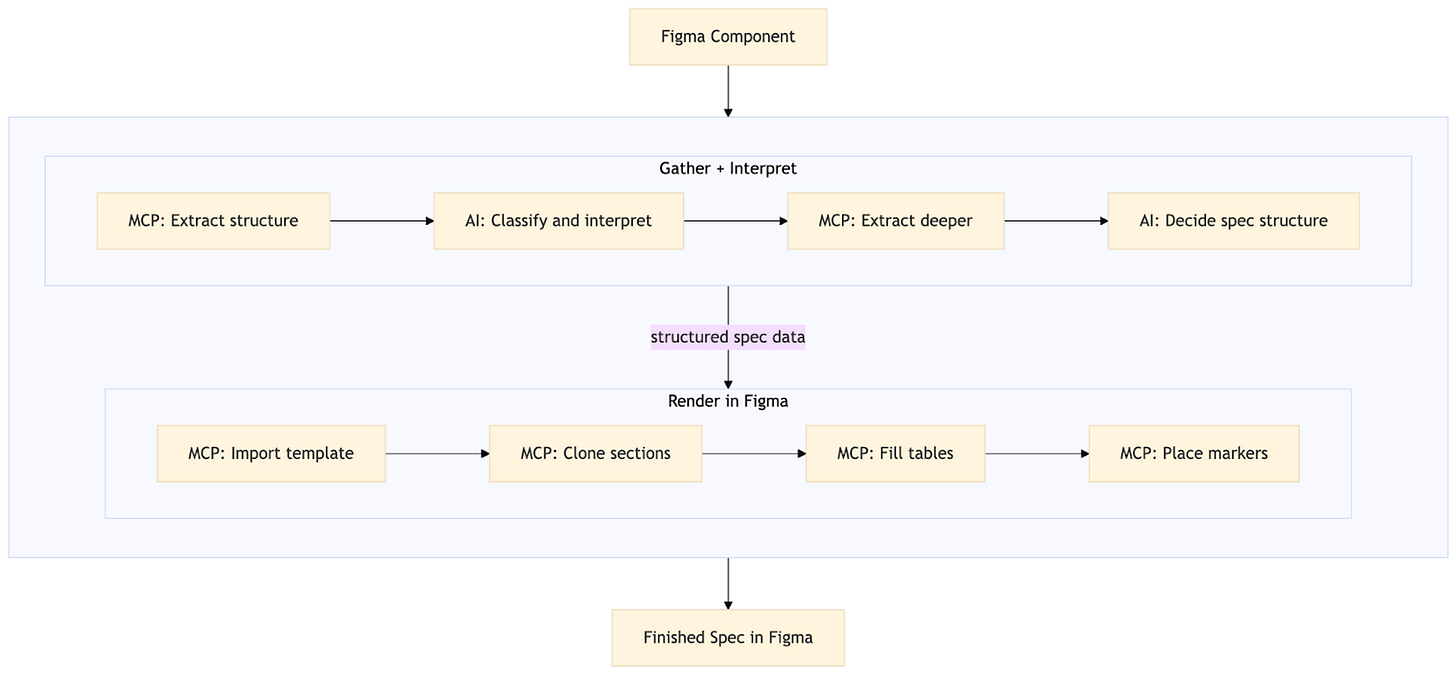

Uber’s uSpec system connects an AI agent in Cursor to Figma through the Console MCP, crawls the component tree, extracts tokens and styles, and renders finished spec pages directly in the Figma file. What took weeks per component now takes minutes across seven implementation stacks. Uber open-sourced the whole thing at uspec.design.
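
To make the crawl-and-extract step concrete, here is a minimal sketch in TypeScript. It is not uSpec’s code and it does not call Figma or the Console MCP: the `DesignNode` shape, the token names, and the `collectTokens` helper are all hypothetical, invented for illustration. It only shows the general idea of walking a component tree and recording which layers use which tokens.

```typescript
// Hypothetical node shape for illustration; not Figma's or uSpec's data model.
interface DesignNode {
  name: string;
  tokens: Record<string, string>; // style property -> token name (assumed format)
  children: DesignNode[];
}

// Depth-first walk that records, for each token, the paths of the layers using it.
function collectTokens(root: DesignNode): Record<string, string[]> {
  const usage: Record<string, string[]> = Object.create(null);
  const visit = (node: DesignNode, parentPath: string): void => {
    const path = parentPath ? parentPath + "/" + node.name : node.name;
    for (const property of Object.keys(node.tokens)) {
      const token = node.tokens[property];
      if (!usage[token]) usage[token] = [];
      usage[token].push(path);
    }
    for (const child of node.children) visit(child, path);
  };
  visit(root, "");
  return usage;
}

// Made-up component used only to exercise the walk.
const button: DesignNode = {
  name: "Button",
  tokens: { fill: "color.primary", radius: "radius.md" },
  children: [
    { name: "Label", tokens: { text: "color.onPrimary", font: "type.label" }, children: [] },
    { name: "Icon", tokens: { fill: "color.onPrimary" }, children: [] },
  ],
};

const tokenUsage = collectTokens(button);
```

A spec page is then mostly a rendering problem: the resulting map says which tokens a component depends on and where, which is the raw material a spec needs.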

Google Stitch takes a parallel approach with its DESIGN.md format, a portable markdown file encoding design rules that any agent can read. Its tight integration with AI Studio makes it easy to build out the rest of the stack, such as adding a database or real-time APIs.

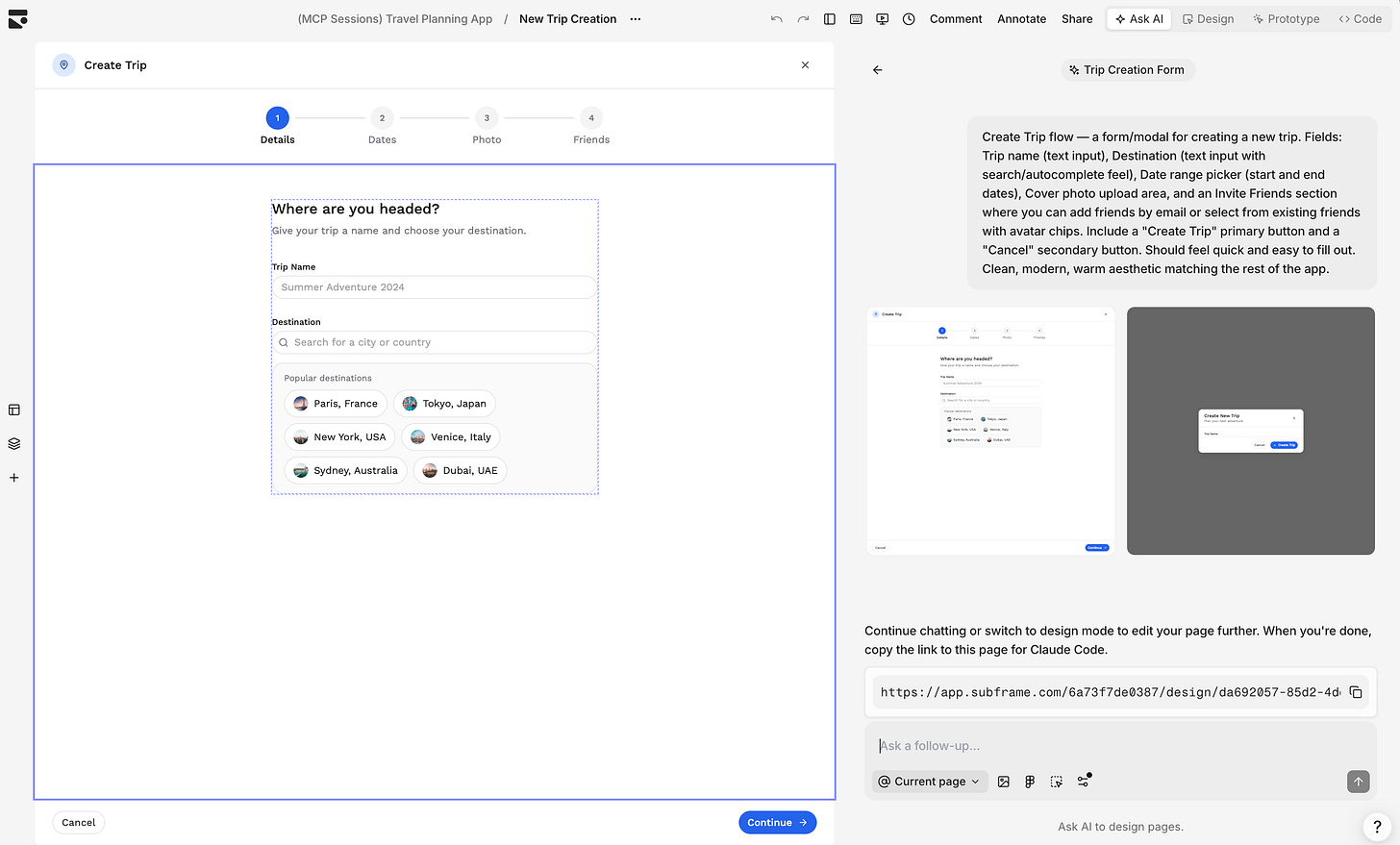

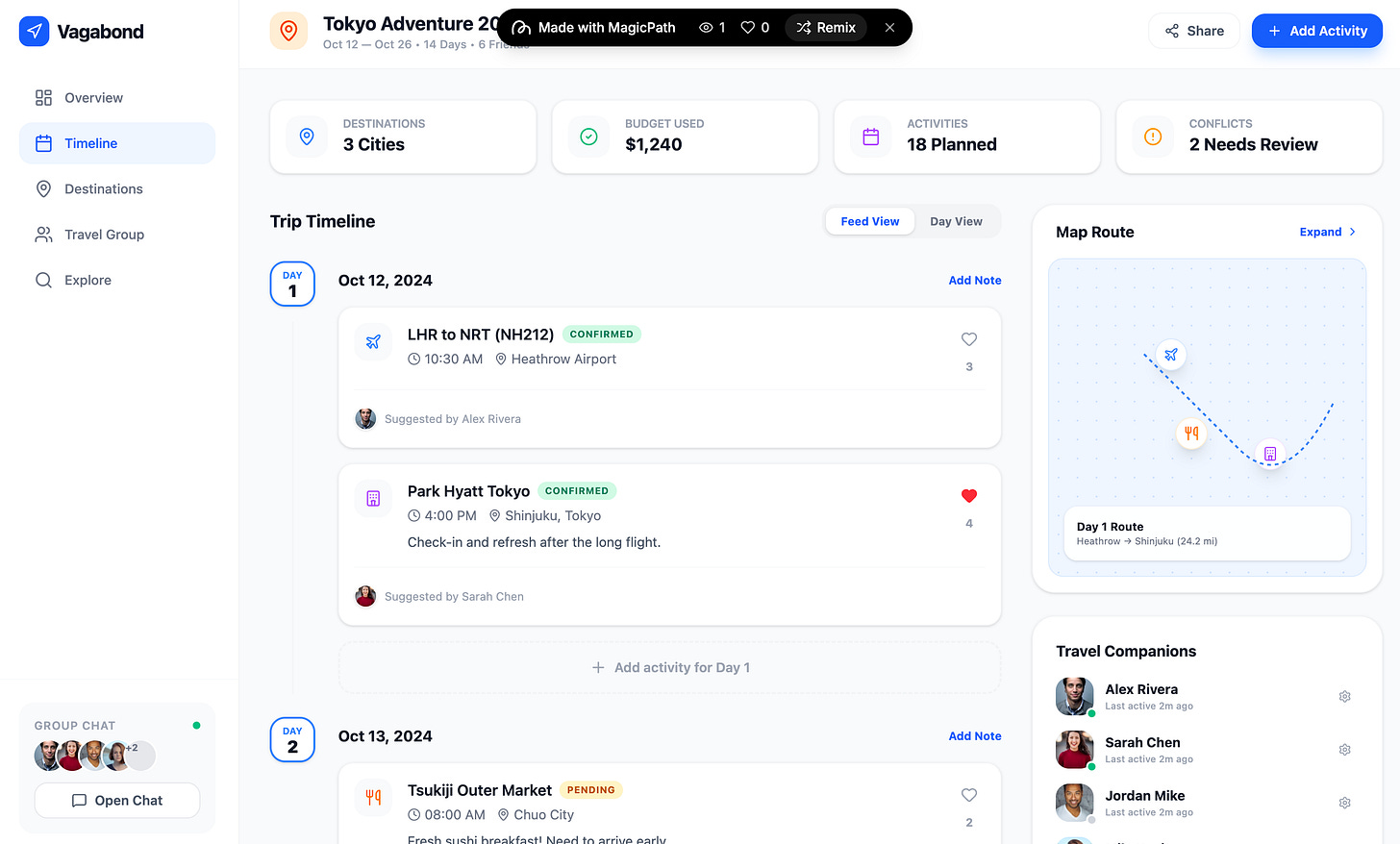

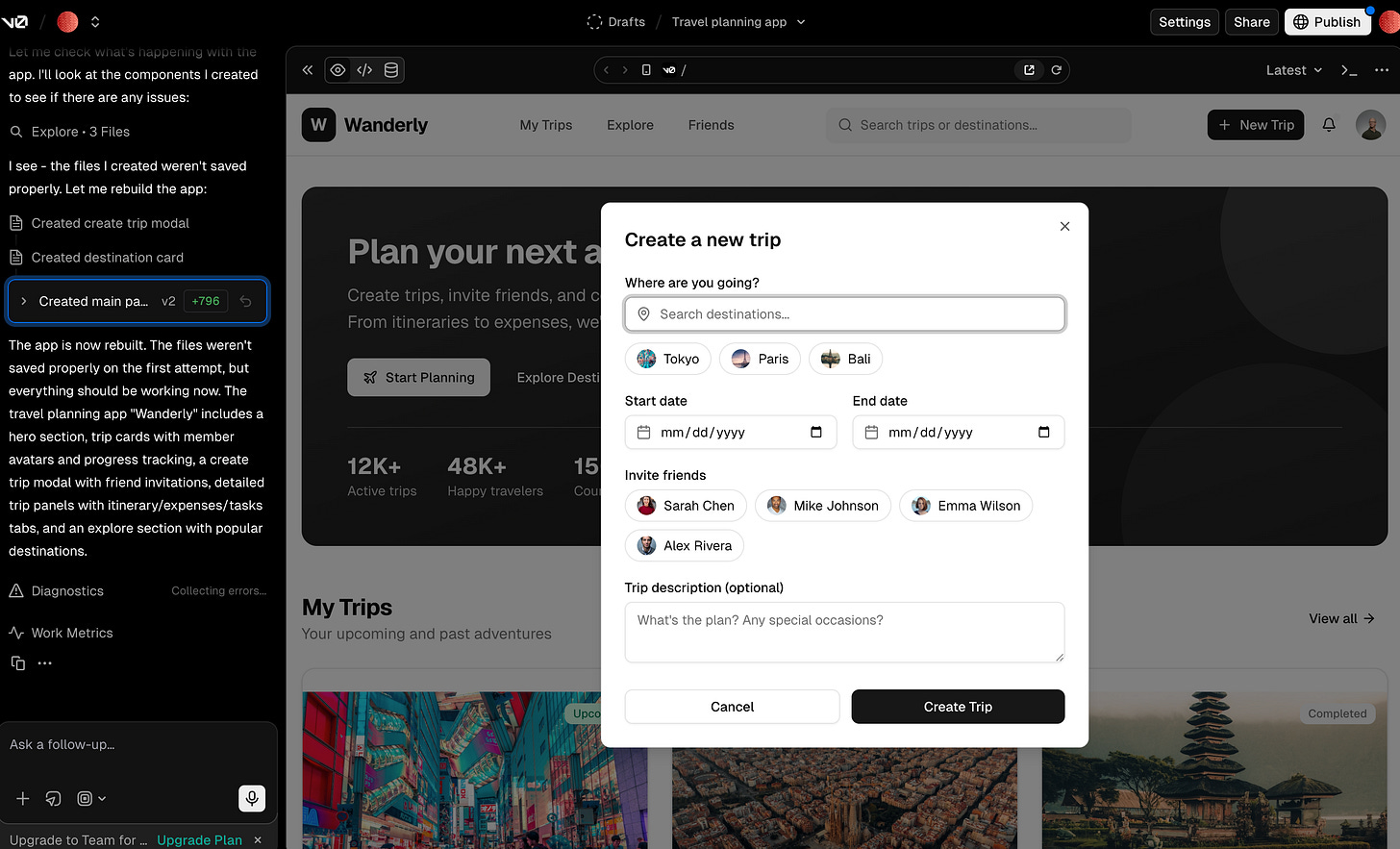

Code-First Platforms

This is the broadest category, and it runs a spectrum from tools with full visual editors to tools with no visual surface at all.

On one end, tools like Subframe, MagicPath, Tempo, and Polymet give you a visual editor built on top of real production code. In Subframe, a coding agent can push targeted edits that show up in the editor immediately, and you switch freely between design mode and code mode. MagicPath anchors on the design system with Figma token import and an infinite canvas for exploring variations. Tempo takes a more formal approach, generating a PRD first, then wireframes, then code. These tools care about your design system, and it shows in the output.

On the other end, Lovable, Bolt, and v0 collapse the design phase into development entirely. Describe what you want, get a working app, deploy it. Lovable reached $200 million in annual recurring revenue within twelve months. Bolt went from zero to $40 million ARR in six months. v0 has more than six million developers. These are production tools that happen to produce UI, and for internal tools, prototypes, and demos, that is often exactly what you need.

The gap between the two ends of this spectrum became clear in testing. The tools that generate within a defined design system produce output that stays consistent across iterations. The tools that generate from scratch every time converge on a recognizable sameness: clean, competent, generic.

Design consistency drifts the moment you start iterating.

The Design System Is the Moat

In working with these tools, one insight emerged for me: the tools that understand your design system produce better output than the ones that don’t.

This has a non-obvious implication. The competitive moat in this market is not generative quality, which is commoditizing fast. The moat is the design system graph: the tokens, components, spacing scales, typography rules, and conventions that make your product look like your product and not a generic template.

Whoever makes that system machine-readable for agents will win the enterprise.
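
Here is a rough sketch of what “machine-readable” can mean in practice, written in TypeScript. Everything in it is an assumption for illustration: the token names and values, the `StyleRule` shape, and the `findOffSystemValues` helper are hypothetical and not taken from any of the tools above. The idea is simply that once tokens are data, an agent’s output can be checked against them automatically.

```typescript
// Hypothetical token set; names and values are illustrative only.
const tokens: Record<string, string> = {
  "color.primary": "#1a73e8",
  "color.surface": "#ffffff",
  "space.sm": "8px",
  "space.md": "16px",
};

// Assumed shape of one generated style declaration.
interface StyleRule {
  property: string;
  value: string; // expected to be a token name, not a raw literal
}

// Returns the rules whose value is not a known token name.
function findOffSystemValues(
  rules: StyleRule[],
  system: Record<string, string>
): StyleRule[] {
  return rules.filter(
    (rule) => !Object.prototype.hasOwnProperty.call(system, rule.value)
  );
}

// Made-up agent output: two token references and one near-miss hex literal.
const agentOutput: StyleRule[] = [
  { property: "background", value: "color.surface" },
  { property: "padding", value: "space.md" },
  { property: "color", value: "#1b74e9" },
];

const drift = findOffSystemValues(agentOutput, tokens);
```

In this made-up example, `drift` holds the single `color` rule, the kind of almost-right value that is easy to miss by eye and trivial to catch with a lookup.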

The Roles Are Evolving, Not Disappearing

The design role is not disappearing. Neither is the frontend engineering role, or the product management role. But the jobs to be done within each of those roles are shifting in ways that are already visible.

Cheng Lou, an influential frontend developer and core contributor to the React ecosystem, recently released Pretext: a pure JavaScript library that measures and lays out multiline text without touching the browser’s DOM. Lou’s argument is that 80% of the CSS spec could be avoided if developers had better control over text, and that AI “alleviates the need of having more hard-coded CSS configs.”

Lou is not building a design agent. He’s building infrastructure that makes design agents reliable, the verification layer that lets an agent know whether its output is correct before anyone opens a browser.

That’s what the frontier of frontend engineering looks like: building the systems that let machines do it verifiably.
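
As a toy illustration of checking layout without a browser, here is a sketch in TypeScript. It is not Pretext’s API or algorithm. It assumes every character is the same width, which real fonts are not, and uses plain greedy line breaking; `CHAR_WIDTH`, `measureText`, and `layoutLines` are names I made up for the example.

```typescript
// Assumed metric: every character is 8px wide. Real measurement needs font data;
// this stand-in keeps the sketch self-contained.
const CHAR_WIDTH = 8;
const measureText = (text: string): number => text.length * CHAR_WIDTH;

// Greedy line breaking: keep adding words until the next one would overflow.
// A single word wider than maxWidth is placed on its own line and overflows.
function layoutLines(text: string, maxWidth: number): string[] {
  const result: string[] = [];
  let current = "";
  for (const word of text.split(/\s+/)) {
    if (word === "") continue;
    const candidate = current === "" ? word : current + " " + word;
    if (current !== "" && measureText(candidate) > maxWidth) {
      result.push(current);
      current = word;
    } else {
      current = candidate;
    }
  }
  if (current !== "") result.push(current);
  return result;
}

// Check: does this label fit in two lines of a 96px-wide button?
const wrapped = layoutLines("Continue to secure checkout", 96);
const fitsInTwoLines = wrapped.length <= 2;
```

Under these assumptions the label wraps to three lines, so `fitsInTwoLines` is false and an agent could flag the overflow before anything is rendered.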

The same shift is happening in design. At Uber, Ian Guisard didn’t stop being a design systems lead when uSpec automated his spec-writing. His job shifted from producing documentation to encoding expertise, writing agent skills, defining validation rules, deciding what “correct” means for each component across seven platforms. The human became the system designer, not the system operator.

The canary is singing. And the song is about the work shifting from execution to judgment, from operating the system to designing the system itself.
