The Brief: The AI Factory Era Begins at GTC

NVIDIA GTC went bigger on AI factories. DoorDash now turns 8M Dashers into physical data collectors. Google upgraded Vibe Coding and Vibe Design in the same week.

The Download

Here’s the news that mattered this week

GTC 2026 Highlights

Jensen Huang's two-hour keynote centered on NVIDIA's transition from chip vendor to full-stack AI infrastructure platform. Three announcements stood out.

The Groq Acquisition Pays Off Immediately. Three months after a $20B acqui-hire, NVIDIA debuted the Groq 3 LPU, an SRAM-based inference accelerator that sits alongside Rubin GPUs in rack-scale deployments. 150 TB/s memory bandwidth versus 22 TB/s on Rubin’s HBM4. Huang suggested up to 25% of cluster compute could be Groq silicon. NVIDIA killed its own Rubin CPX product to make room. The inference economy now has dedicated hardware, and NVIDIA owns both sides of the training-inference split.

$1 Trillion Through 2027. Huang doubled last year’s $500B forecast, projecting $1 trillion in cumulative Blackwell and Vera Rubin orders through 2027. Goldman maintained a Buy rating, noting the guidance directly counters the “peak capex in 2026” thesis weighing on AI infrastructure names.

Runway Previews Real-Time Video Generation on Vera Rubin. Runway and NVIDIA demonstrated a new video model running on Vera Rubin hardware with time-to-first-frame under 100ms for HD video. The model feeds into Runway's General World Model (GWM-1) research. (Runway)

DoorDash Launches Tasks, Turns 8M Dashers Into Physical AI Data Collectors

What it is: DoorDash launched a standalone Tasks app paying couriers to film household chores, record multilingual speech, and capture real-world environments to train AI and robotics models. Partners span retail, insurance, hospitality, and tech. Over 2 million tasks completed since 2024. (TechCrunch, Bloomberg)

Why it matters: DoorDash just entered the physical AI data business with 8 million distributed workers already dispatched to real-world locations. Scale AI built a multibillion-dollar company on remote data labeling. DoorDash arrives with in-person collection at a distribution scale that might be hard for any data vendor to match.

Claude Code Channels: Agentic Coding From Your Phone

What it is: Anthropic shipped Claude Code Channels, allowing developers to control Claude Code sessions through Telegram and Discord via MCP. You can now monitor, prompt, and steer persistent coding agents from your phone.

Why it matters: Agentic coding has been tethered to the terminal. Channels makes it asynchronous and mobile, which changes the usage pattern from “sit down and code” to “delegate and check in.” This is Anthropic's direct response to the runaway success of OpenClaw.

Google Ships Vibe Coding and Vibe Design in the Same Week

What it is: Google upgraded AI Studio into a unified full-stack development platform, combining its Antigravity coding agent with Firebase backends, secret management, and one-click deployment to Cloud Run. Separately, it shipped a major Stitch redesign: AI-native infinite canvas, voice interaction, instant prototyping, and export to Figma and HTML/CSS. On this news, Figma shares dropped 4%. (Google Blog, Google Blog, SiliconANGLE)

Why it matters: Google now covers design, code, and deployment in a single ecosystem, all free at launch. When the model provider owns the full stack and bundles the tooling, standalone players in both vibe coding (Replit, Bolt, Lovable) and design (Figma) lose pricing power. Developer tools are a distribution game, and the hyperscalers have distribution locked in.

V-JEPA 2.1: LeCun’s World Model Architecture Posts New Robotics Benchmarks

What it is: Yann LeCun and collaborators (several now at AMI Labs) released V-JEPA 2.1, the latest version of the JEPA video model. It achieves state-of-the-art results on action anticipation and object tracking benchmarks and posts a 20% improvement in real-robot grasping success over its predecessor. (arXiv)

Why it matters: This is the first concrete technical signal from LeCun’s camp since AMI Labs raised $1B on the JEPA thesis two weeks ago. The robotics results in particular matter: the model learns manipulation tasks from just 62 hours of unlabeled robot video, with no task-specific training or reward. If JEPA architectures can generalize physical skills from small data, the capital advantage of massive GPU clusters shrinks and the value of proprietary physical data (see: Mind Robotics) grows.

Mind Robotics Raises $500M Series A on Rivian Factory Data

What it is: Mind Robotics, a Rivian spin-off, closed a $500M Series A led by Accel and a16z. The company trains industrial robots using Rivian’s proprietary factory sensor data and custom silicon.

Why it matters: Industrial incumbents are discovering that their operational data (the physics of how things move, break, and assemble) is as valuable as their products, or more so. This creates a new category of “data-rich” robotics startups where the moat isn’t hardware design but access to high-fidelity physical interaction data. We expect more spin-outs from automakers and heavy manufacturers.

Broadcom Ships 400G Optical DSP at OFC 2026

What it is: Broadcom debuted Taurus at OFC 2026, the first 400G-per-lane optical DSP, enabling 1.6T and 3.2T transceivers purpose-built for the GPU clusters announced at GTC.

Why it matters: Compute is scaling faster than the network connecting it. As NVIDIA moves to rack-scale systems with tens of thousands of dies, the interconnect becomes the binding constraint. Broadcom is positioning itself as the chokepoint for all distributed AI training.

Deep Dive From The Review

NVIDIA’s latest AI rack, Vera Rubin, produces the heat of 160 homes. The next generation will double that. The one after will likely double it again.

The industry is racing to solve the heat problem, from subsea data centers to launching servers into orbit. But the most likely next step is the least exotic: liquid cooling.

The catch? The hardware is the easy part. The real cost is operational. It rewires how facilities are built, staffed, diagnosed, and run.

Our latest by Strange Research Fellow Rahul Narula explores what changes, and where the opportunity sits.

Strange Signals: Data of the Week

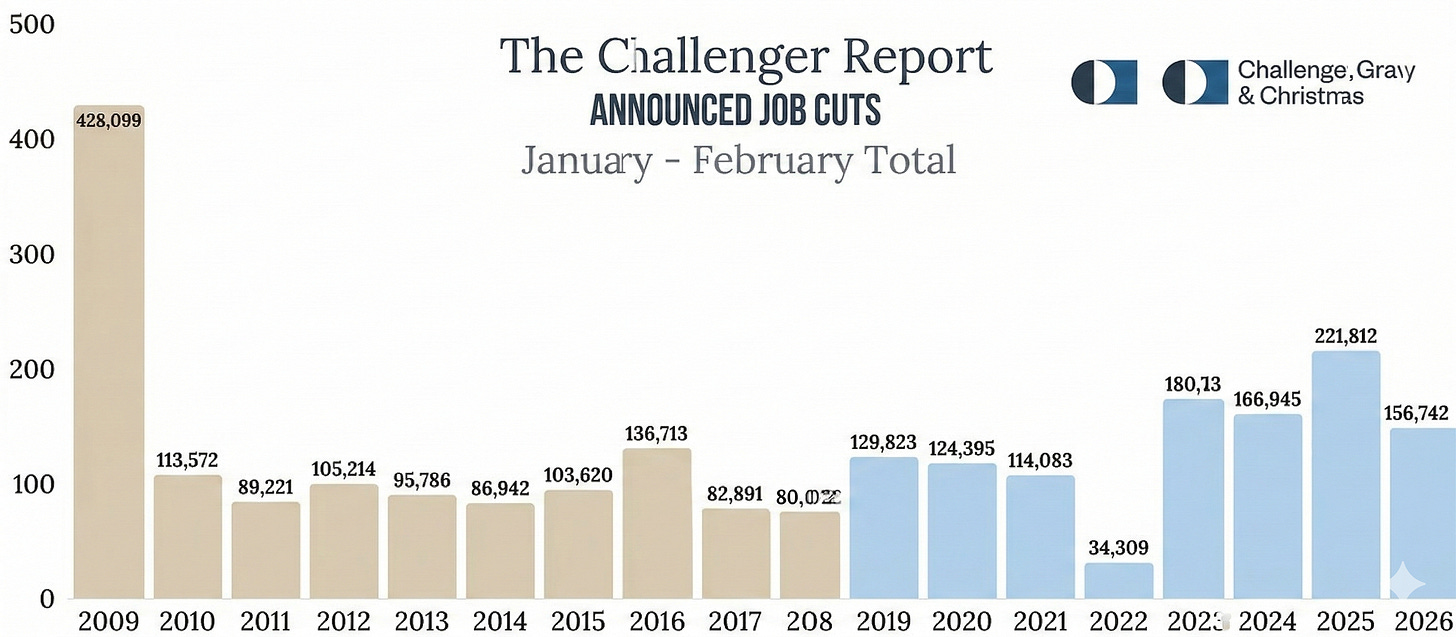

The numbers are adding up fast. In a survey of tech CEOs released this week, 66% reported they no longer plan to backfill roles lost to voluntary attrition. “Replacing departing staff with AI agents” entered the Top 5 strategic priorities for the first time. (SaaStr)

The layoff data supports it. Block cut 4,000 employees in February (40% of headcount). Atlassian cut 1,600 on March 11 (10% of staff, over 900 in R&D). Meta is reportedly planning to cut up to 20% of its 79,000-person workforce, roughly 15,000 roles, to offset $135B in AI capex. (TechCrunch, CNBC)

Data from HR agency Challenger, Gray & Christmas puts it in context: 12,304 job cuts have been explicitly attributed to AI through February 2026, 8% of all announced cuts. That’s up from 5% for the full year of 2025 and 3% since tracking began in 2023. Tech sector cuts are up 51% year over year. Meanwhile, announced hiring plans are down 56% compared to the same period last year, the lowest since tracking began in 2009. (Challenger)

We think companies might be entering a “low-hire, low-fire” era where headcounts shrink through unreplaced attrition and targeted restructuring rather than headline layoffs. Enterprise budgets are being redirected from headcount to AI tooling.