The Brief

The next generation will either work with agents, or work for agents. Plus: Google commits up to $40B to Anthropic, SpaceX takes an option to buy Cursor for $60B, and Chinese research dominates ICLR.

FIELD NOTES

The next generation of humans will either work with agents, or for agents.

I sat with this thought a lot this week as a parent raising two Gen Alpha kids, the first generation to grow up alongside AI, just as I grew up alongside the internet.

The agentic world is spawning and self-improving at a relentless pace. This week, OpenClaw creator Peter Steinberger (@steipete, now at OpenAI) built ClawSweeper, a tool that runs 50 Codex instances in parallel around the clock, scanning GitHub issues and PRs and closing those that have already been implemented or don’t make sense.

It closed 4,000 issues in a single day.

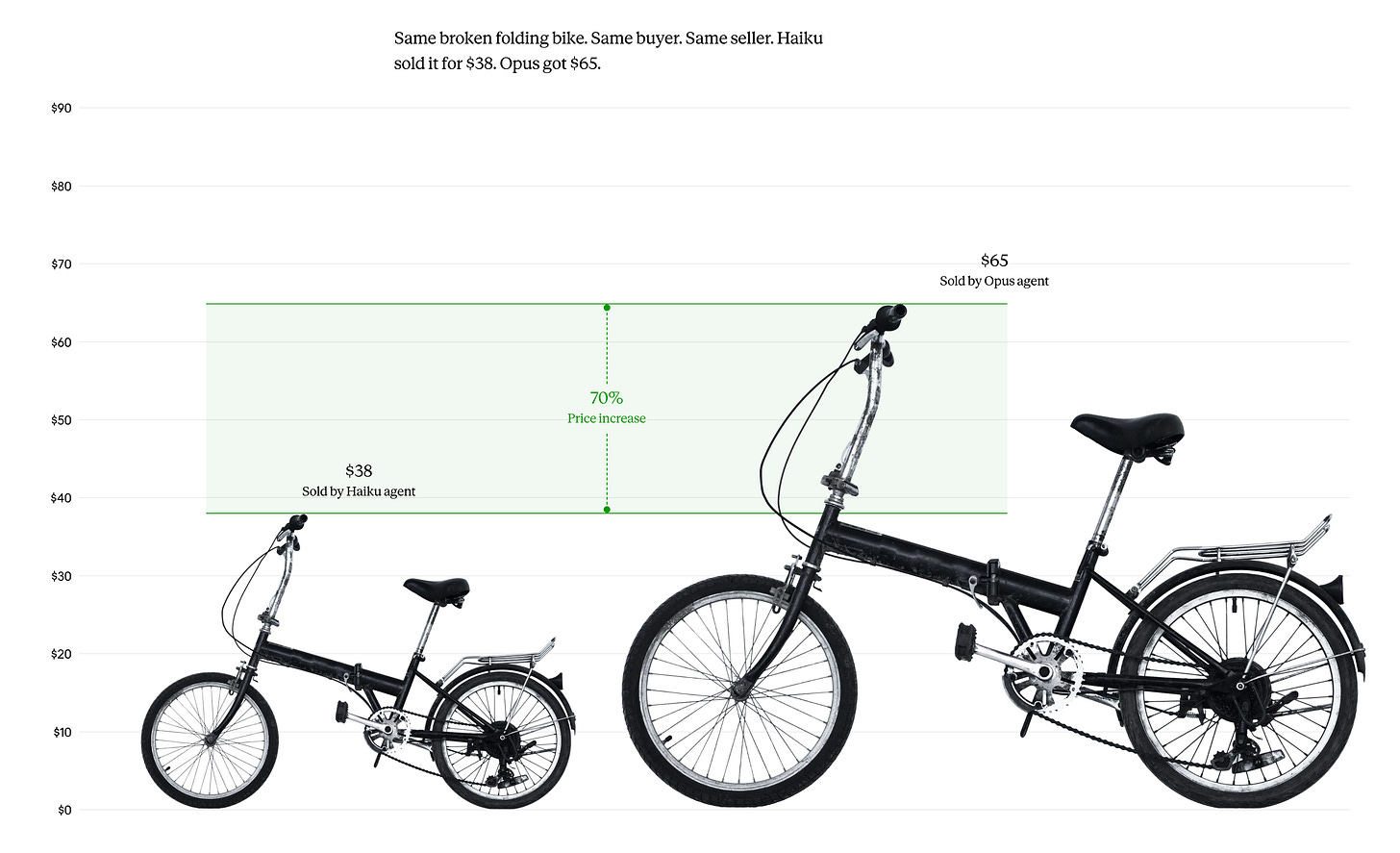

The README is the new dashboard. You don’t need a dashboard when you won’t really need human oversight.

And then there’s Anthropic’s Project Deal, an experiment in which Claude agents negotiated and closed 186 marketplace transactions on behalf of employees, with no human stepping in. The striking part: when Anthropic surveyed participants afterward, people whose agents ran on the more powerful model (Opus rather than Haiku) got much better deals, yet those whose agents had been secretly downgraded to Haiku didn’t realize they’d gotten worse outcomes. They were just as satisfied as the Opus group. They had no way of knowing their agent was less capable, because they never saw the negotiation happen.

Is the implication that, in a world where agents transact on your behalf, the quality of your model becomes an invisible advantage? If so, the people who can afford the best agents get better economic outcomes, and the people who can’t don’t even know they’re losing.

Probably sooner rather than later, humans won’t really be in the loop at all. We’ll just be on the sidelines, watching agents at work as they execute tasks and make decisions on our behalf.

What do you think?

Enjoy the edition.

Tara

THE DOWNLOAD

Google Commits Up to $40B to Anthropic; Amazon Adds $5B Days Earlier

Google committed to investing up to $40B in Anthropic: $10B in cash now at a $350B valuation, with the remaining $30B tied to performance milestones. Days earlier, Amazon pledged another $5B, with an option for $20B more.

Why it matters: My hot take is that financing for frontier-model growth now runs through cloud infrastructure, not venture capital. Google and Amazon are each committing tens of billions, not to gain control of the board or the company, but to stay close to compute demand. The capital required to compete at this scale is pulling frontier labs into permanent cloud partnerships on a scale no traditional funding round can match.

SpaceX Secures Option to Acquire Cursor for $60B

SpaceX announced a deal that gives it the right to acquire AI coding startup Cursor for $60B later this year, or else pay $10B for the collaboration. Under the partnership, Cursor’s models run on xAI’s Colossus training cluster. The deal preempted Cursor’s $2B private fundraise and is structured to close after SpaceX’s planned IPO this summer.

Why it matters: The deal is best understood as an IPO play. SpaceX filed confidentially with the SEC in April, targeting a June listing at a $1.75T valuation. Attaching Cursor lets SpaceX pitch itself to public investors as an AI company, not just a rockets-and-satellites business. The underlying need is real: after merging with xAI, SpaceX has a million-GPU supercomputer but no competitive AI product, and all 11 original xAI cofounders have recently left the company. Cursor gives SpaceX a revenue-generating product in the most lucrative AI category, an A+ AI team, and a reason for Wall Street to assign AI-grade multiples.

DeepSeek V4 and GPT-5.5 Ship a Day Apart

OpenAI shipped GPT-5.5 on April 23; DeepSeek dropped V4 Preview the next day. Both feature 1M-token context windows. DeepSeek V4 Pro (1.6T total parameters, 49B active) matches or approaches frontier closed models on coding and reasoning benchmarks at roughly one-sixth the cost. Separately, builders have been producing stunning game-style graphics with OpenAI’s Image-2; check out the Time Machine Explorer by Pietro Schirano below.

Why it matters: DeepSeek’s pricing (30x cheaper) puts direct pressure on what closed labs can charge. Interestingly, V4 is optimized for and served on Huawei Ascend infrastructure, though training likely still relied in part on NVIDIA GPUs.

Google Splits Its TPU Line Into Dedicated Training and Inference Chips

At Cloud Next, Google announced its eighth-generation TPUs as two separate architectures: TPU 8t for training and TPU 8i for inference. The inference chip triples on-chip SRAM and introduces a new collective acceleration engine and network topology, all designed around serving mixture-of-experts models to millions of concurrent agents.

Why it matters: AWS split training and inference silicon years ago, but Google’s 8i is the first chip designed from the ground up around agentic workloads. The architecture signals that the AI infrastructure race may be shifting from how fast you can train a model to how cheaply you can serve it to millions of agents running simultaneously.

Chinese Institutions Lead ICLR 2026 Accepted Papers by a Wide Margin

ICLR 2026 authorship data shows Chinese universities taking the top four spots by share of accepted papers: Tsinghua (4.23%), Shanghai Jiao Tong (3.07%), Peking (2.96%), and Zhejiang (2.82%). US institutions trail, with MIT at 2.22% and Stanford at 2.20%.

Why it matters: Singapore and South Korea are now matching the entire EU-27 in accepted paper contributions. Tsinghua alone has nearly double MIT’s share. Publication share could be a leading indicator of where talent and capability concentrate.

DEEP DIVE FROM THE REVIEW

The Vercel security breach last week wasn’t about a stolen password or a phishing attack. It was about something worse: a permission you gave once, forgot about, and can’t see anymore.

Last Sunday, 2.4 million websites were put at risk through one stale OAuth token from an AI tool nobody was even using.

Strange Research Fellow Joy Yang explains why this was a predictable outcome of current OAuth architecture, and what a fix could look like.

Read on👇