Hands Down, the Hardest Problem in Robotics

Humanoid robots can walk, run, even do backflips. What they can't do reliably: pick up a screwdriver. The hand is now the gating constraint for the entire industry.

Hands are to humanoids what last-mile delivery is to logistics.

The hand may be a small fraction of the system, but it carries a disproportionate share of the cost and the failures. It is the actual bottleneck holding back the last mile of robotic utility.

Recent narratives around humanoids have largely focused on robotic foundation models, reasoning, locomotion, and aesthetics. But zoom out a little and the picture shifts. The robotic hand has become the gating constraint for humanoid robots, and I believe the companies that solve it will define the next phase of the industry.

Hands Are Miserable To Build

The hand is the hardest part of a humanoid to build because of three compounding problems: cost, complexity, and failure density.

According to Morgan Stanley’s analysis of Tesla Optimus Gen-2, hands account for approximately 17% of the total bill of materials cost (roughly $9,500 out of a $50,000–60,000 unit). That’s a disproportionate share for a subsystem that represents a small fraction of robot mass.

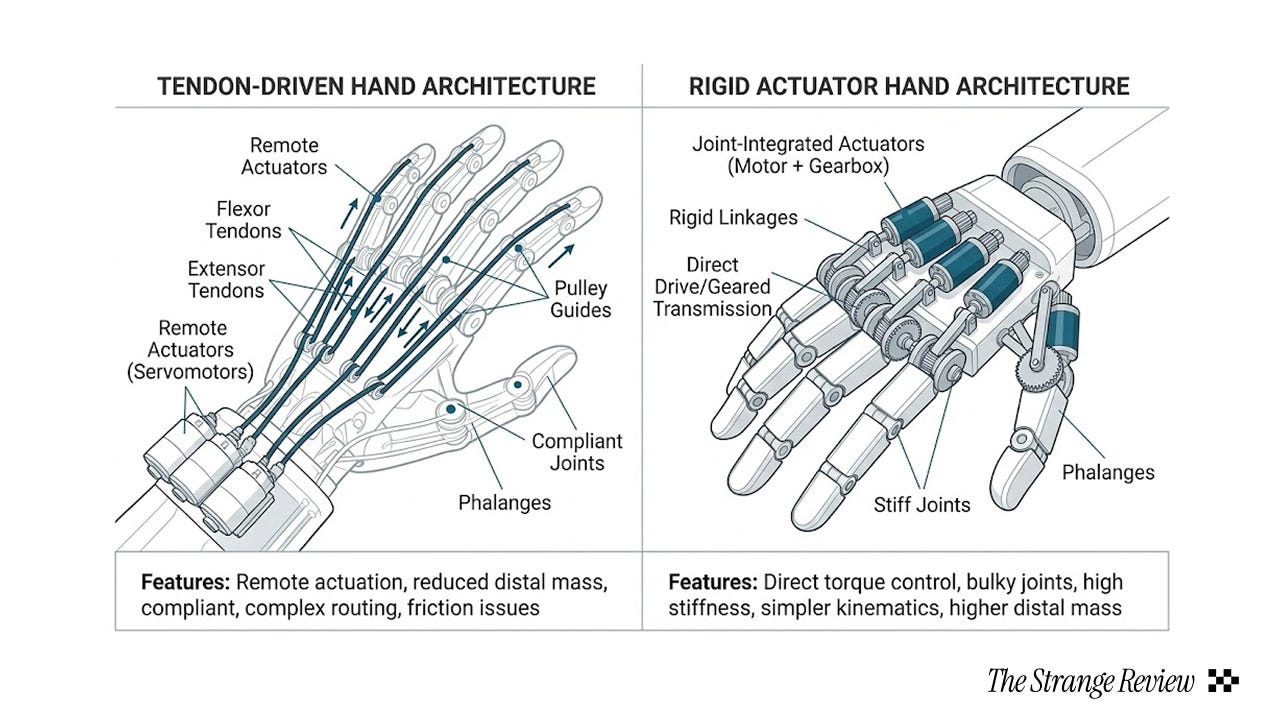
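As a quick sanity check on the cost figure quoted above, the short sketch below recomputes the hand's share of the bill of materials across the quoted unit-cost range. It uses only the numbers given in the text; the function name and the choice of the range midpoint as a reference point are assumptions made for illustration.

```python
# Figures quoted in the text above; nothing here comes from outside the article.
HAND_COST_USD = 9_500
UNIT_COST_RANGE_USD = (50_000, 60_000)


def cost_share(part_cost: float, total_cost: float) -> float:
    """Return part_cost as a fraction of total_cost."""
    return part_cost / total_cost


low_total, high_total = UNIT_COST_RANGE_USD
mid_total = (low_total + high_total) / 2  # assumption: midpoint used as a reference

share_at_low_total = cost_share(HAND_COST_USD, low_total)
share_at_mid_total = cost_share(HAND_COST_USD, mid_total)
share_at_high_total = cost_share(HAND_COST_USD, high_total)

for label, share in [
    ("low-end unit cost", share_at_low_total),
    ("midpoint unit cost", share_at_mid_total),
    ("high-end unit cost", share_at_high_total),
]:
    print(f"Hand share at {label}: {share:.1%}")
```

At the midpoint of the range the share comes out near 17%, in line with the quoted figure; across the full range it sits between roughly 16% and 19%.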

The complexity is equally stark. Optimus Gen 3’s hand has 22 active degrees of freedom (DoF), compared with 27 DoF in the human hand. A leg’s joint chain has 6 to 7 DoF, so a single hand has roughly 3 to 4x the control dimensionality of a leg. The Gen 3 design relocates actuators into the forearm via tendon-driven cables, mimicking human anatomy but adding mechanical complexity and failure modes.
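The ratio in the paragraph above follows from simple division. The sketch below reproduces it from the DoF counts quoted in the text; the variable names are illustrative only, and treating raw DoF count as a proxy for control dimensionality is a simplifying assumption.

```python
# DoF counts as quoted in the text above.
HAND_ACTIVE_DOF = 22       # Optimus Gen 3 hand
HUMAN_HAND_DOF = 27        # human hand, per the text
LEG_DOF_RANGE = (6, 7)     # DoF per leg joint chain

leg_dof_low, leg_dof_high = LEG_DOF_RANGE

# Assumption: DoF count stands in for control dimensionality.
ratio_vs_larger_leg = HAND_ACTIVE_DOF / leg_dof_high   # hand vs. a 7-DoF leg
ratio_vs_smaller_leg = HAND_ACTIVE_DOF / leg_dof_low   # hand vs. a 6-DoF leg
fraction_of_human = HAND_ACTIVE_DOF / HUMAN_HAND_DOF

print(f"Hand vs. leg: {ratio_vs_larger_leg:.1f}x to {ratio_vs_smaller_leg:.1f}x")
print(f"Share of human hand DoF: {fraction_of_human:.0%}")
```

The hand-to-leg ratio lands between about 3.1x and 3.7x, which the text rounds to 3 to 4x, and the robotic hand covers about 81% of the 27 DoF attributed to the human hand.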

This shows up directly in performance. While humanoid robots achieve nearly 100% success rates grasping simple objects like apples and tennis balls, success rates plummet to around 30% for complex items such as spoons, screwdrivers, or scissors.

Actuation and Sensing

The hand bottleneck is actually two bottlenecks stacked on top of each other. They have different root causes, different solution paths, and potentially different investable layers.

The actuation problem is mechanical. More than 70% of humanoid robot hands rely on tendon-driven or hybrid actuation, while most of the rest of the robot uses simpler rigid motors. The reason: rigid actuators break down the moment contact gets unpredictable, when objects slip, deform, or change shape. Tendons offer compliance but add complexity and failure modes.

The sensing problem is informational. Vision sensors (RGB, RGB-D, LiDAR) scale linearly with cost and compute. Tactile sensing does not. In the MERPHI hand survey, fewer than 50% of humanoid-native hands have fully integrated tactile sensing, and those that do typically sacrifice payload, speed, and/or manufacturability.

This is why recent platforms have been quietly reallocating budget toward the hand subsystem: palm cameras (Figure-03), fingertip tactile arrays (Helix 02), tendon-driven compliance (Optimus). These look like quirky upgrades. They’re actually attempts to stabilize the highest-failure subsystem of the robot.

The same pattern shows up in what actually gets deployed.

A Morgan Stanley ecosystem analysis of humanoid robots shows that dexterity and fine manipulation are the top two bottlenecks to scaling, alongside power. Walking isn’t even considered a gating issue for pilot deployments anymore. Real-world deployments in logistics and manufacturing overwhelmingly restrict humanoids to navigation and pick-and-place tasks.

Contrary to popular belief, that restriction isn’t down to limited AI reasoning either (see Dexterous Manipulation Benchmarks and RoboManipBaselines).

The same conclusion holds when we look specifically at Chinese humanoid companies, which, while arguably ahead, have not solved the hand bottleneck either. This might be why almost all of their publicly released demos show full-body athletic tasks with little hand-specific manipulation.

UBTech’s Walker S2 represents the max-DoF approach: 52 total degrees of freedom, 15 DoF per hand, Gen-4 bionic hands, continuous 24/7 operation via autonomous battery swapping. Impressive specs, right? Despite all this, Walker’s public demos and deployment targets emphasize logistics handling and inspection only. They don’t touch deformable or tool-based manipulation because even at 52 DoF, hand reliability remains the limiting factor.

Unitree has the opposite problem. They’ve achieved locomotion performance comparable to Boston Dynamics at orders of magnitude lower cost, with entry-level humanoids priced as low as $6,000. But hand payloads are limited to 2 to 3 kg, active DoF are reduced, and task space is severely constrained despite extremely capable motion stacks.

Fourier Intelligence’s GR-1, China’s first full-scale humanoid, targets rehabilitation and assisted manipulation, prioritizing safe grasping. It avoids high-speed or high-force manipulation altogether. That’s because controlled contact is where it, too, falls short.

If hands are the bottleneck, who captures the value from solving them?

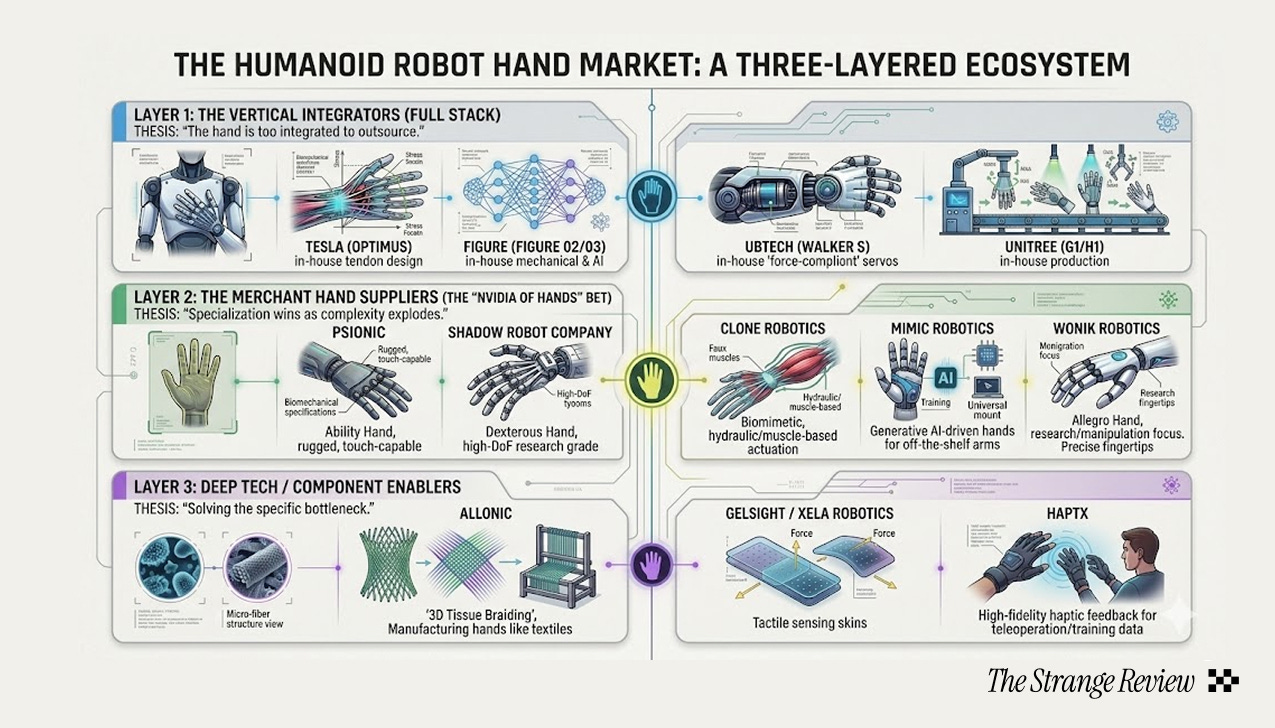

One possibility is that hands remain vertically integrated. Each humanoid company develops proprietary solutions, and hand quality becomes a differentiator baked into the full-stack robot. This is the current default. Tesla, Figure, UBTech, and others are all developing hands in-house.

But vertical integration has limits. The actuation and sensing problems are deep enough that specialist suppliers may emerge: companies focused purely on tendon-driven mechanisms, high-density tactile arrays, or compliant gripper architectures.

The question is whether the hand subsystem is modular enough to support that kind of layer separation, or whether integration with the rest of the robot’s control stack makes standalone “hand companies” unviable.

We don’t have a strong view yet. But the companies betting hardest on solving hands, whether through actuation innovation, sensing density, or both, are the ones to watch.

Joy Yang is a Strange Research Fellow. She is pursuing computer science and government at Oxford, and is a researcher with its Visual Geometry Group. She was previously an intern with OpenAI and Google.

Yes, it’s a serious technology barrier to the potential of humanoids. Here are my initial findings: https://www.the-waves.org/2020/08/04/robots-take-over-jobs-which-hands-to-prevent-them/

Great piece. The hand-as-bottleneck framing is spot on. I think the deeper issue goes beyond hardware though. What makes contact-rich manipulation so hard is that it requires fine-grained prediction of physical interaction: what happens when fingers close around something deformable, when grip force needs to adjust in real time, when a tool slips mid-use. Current robotics foundation models are getting decent at gross manipulation, but the moment you need to predict what's happening at the contact surface between a fingertip and a screwdriver, you need a level of physical simulation nobody's cracked yet. Better hands alone won't get us there without the predictive models to drive them.