AI Swallows Software Whole

This week, both Anthropic and Google launched or teased features that collapse entire software categories into model-native capabilities.

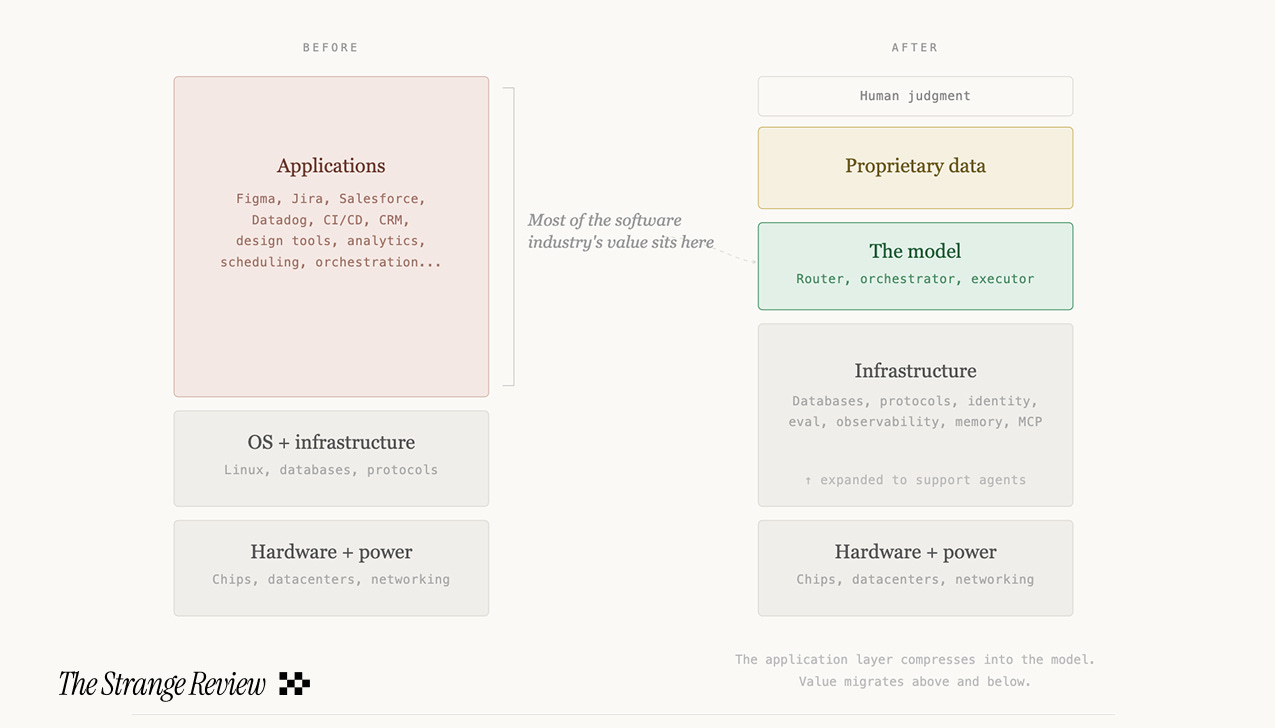

For forty years, the computing stack has had a stable shape: hardware at the bottom, operating systems and infrastructure in the middle, applications on top. The application layer is where most of the software industry’s value has sat. Each application is a product, sold by a company, with its own sales cycle, implementation, and license.

What we are seeing now is the sudden, and very intense, compression of that application layer into the model directly.

AI eats software. Or rather, swallows software whole. Model makers don't just want to be the engine. They want to be the car.

This week alone, both Anthropic and Google launched or teased features that collapse entire software categories into model-native capabilities.

From Anthropic:

Managed Agents: A cloud runtime for autonomous AI agents. Anthropic handles sandboxing, state, and orchestration.

Advisor Tool: A cheap model runs the task and routes to an expensive model to review the hard decisions.

Routines: Scheduled agent automations. Trigger on a cron, a GitHub event, or an API call.

Opus 4.7 and an AI design tool: A new model and a leak of a website and slides design tool.

From Google / Gemini:

Gemini for Mac: A native macOS app with Gemini. Share your screen for contextual help on whatever you are looking at, including local files. Read access to your desktop, with full control likely coming (probably their answer to Computer Use).

Skills: Reusable prompts for Gemini in Chrome. Google provides a pre-built library across productivity, shopping, and research categories.

Agent Mode: The model breaks a complex goal into steps and executes them across Gmail, Calendar, Maps, and the open web.

The model is not just being integrated into the software stack through API keys. The model is collapsing the software stack into itself.

The New Stack

The model does not replace the infrastructure (yet). The model does not replace the data (yet). Proprietary datasets, accumulated customer records, specialized corpuses, these become more valuable, not less, because the model makes them more actionable.

What the model replaces is the application between the infrastructure and the human.

The model becomes the router. You describe a goal. The model determines which capabilities it needs, in what sequence, with what parameters. It calls tools through MCP, executes code in sandboxed environments, reads and writes files, browses the web, and reports back.

This is what Gemini’s Agent Mode does when it breaks a trip-planning request into flight search, hotel comparison, and calendar entry. It is what Claude’s Routines do when they pull the top bug from a tracker at 2 AM, attempt a fix, and open a draft PR.

The human sets the objective. The model selects and sequences the tools.

Each software product that the model can invoke as a capability, now recedes behind it. The user no longer opens Figma to do design work. The user tells the model to produce a design. The product disappears behind the prompt.

Its data and its specialized functions may persist, but its interface, its brand, its direct relationship with the user, compress into an MCP server that the model calls.

Swallowed whole.

So what doesn’t compress?

Hardware, compute, and power. The physical layer the model runs on. The further up the stack the model reaches, the more value pools at the bottom.

Deterministic systems and proprietary data. Databases, transaction processing, financial ledgers: anything where exact reproducibility matters more than judgment.

I predict an interesting inversion will happen: software companies that currently derive value from their product interface will increasingly derive value from the data behind it. Pinterest’s moat is not the pin board. It is one of the largest labeled visual datasets of human aesthetic preference ever assembled. The product recedes. The data persists. We will see software companies become data companies whether they intend to or not.

The operational layer around the agent. Identity, permissions, compliance, evaluation, observability, memory, context management. Every agent that acts on a human’s behalf needs to prove it is authorized, needs to be audited, needs to be tested, and needs to remember what it has done.

And above everything: human judgment and liability. That is what human jobs will increasingly look like: not building the product, but specifying what the product is allowed to do, connecting it to the data it needs, and taking responsibility for whether the agent did the job correctly.

The Market For Software Will Grow, And Consolidate

The total market for software does not shrink. It likely grows by multiples. You can now do so much more with software than ever before. But the spend will consolidate radically. Instead of hundreds of SaaS vendors, the market tilts toward a small number of model platforms.

Each model maker is building towards a proprietary ecosystem: Anthropic has MCP connectors, Claude Code Skills, Cowork plug-ins, Managed Agent configurations, and Routines. Google has Gemini Skills, Agent Mode, Workspace integrations, Antigravity, and soon AutoBrowse.

While these standards are theoretically open, the workflows accumulated over time are not.

What I mean: your Claude Routines do not run on Gemini. Your Cowork plug-ins do not work in ChatGPT. The lock-in is not in the data, which lives in your own systems. The lock-in is in the operational context: how your team has learned to work with this specific model, the configurations they have built, the habits they have formed. This may prove stickier than data lock-in ever was, because it is distributed across an organization’s muscle memory rather than centralized in an exportable database.

Lock-ins have trade-offs. When Anthropic absorbed features from the open-source agent framework OpenClaw and then restricted third-party auth access, thousands of community investment into building these workflows became stranded overnight. They might suddenly turn on KYC, which they did today. The workflows are beholden to the model maker’s rules.

Where does the model’s appetite end?

I read a paper by a research team from Meta AI and KAUST published this month proposing what they call Neural Computers: a machine form in which the model does not merely replace the application layer but absorbs the computer itself, unifying computation, memory, and I/O into a single learned runtime.

Their prototype, trained on screen recordings of Ubuntu desktops, can render interfaces and respond to user actions.

While the vision is a decade or more from realization, the question it asks is the logical extension of what is already happening in production: if the model has absorbed the software, why shouldn’t it absorb the machine?

For now, it doesn’t have to be the computer. It just has to run it.